5.4.10 Vision Voice Box

Feature Introduction

This section describes how to experience the full ASR + VLM/LLM + TTS pipeline on the RDK platform.

Code repository: https://github.com/D-Robotics/hobot_llamacpp.git

Supported Platforms

| Platform | Runtime Environment | Example Feature |
|---|---|---|
| RDK X5, RDK X5 Module | Ubuntu 22.04 (Humble) | Vision Voice Box Experience |
| RDK S100, RDK S100P | Ubuntu 22.04 (Humble) | Vision Voice Box Experience |

Prerequisites

RDK Platform

- The RDK must be the 4GB RAM version.

- The RDK must be flashed with the Ubuntu 22.04 system image.

- TogetherROS.Bot must already be installed on the RDK.

- Install the ASR module for voice input by running `apt install tros-humble-sensevoice-ros2`.

Usage Instructions

RDK Platform

- The Vision-Language Model can be used (see the Vision-Language Model section).

- The TTS tool can be used (see the Text-to-Speech section).

- The ASR tool is already installed.

- Connect a USB speaker with microphone (or a wired headset if your RDK product has a 3.5mm audio jack). After connecting, verify that the audio device is recognized properly:

root@ubuntu:~# ls /dev/snd/

by-id by-path controlC0 controlC2 pcmC0D0c pcmC0D0p pcmC2D0c pcmC2D0p timer

The audio device name shown in the figure should be "plughw:0,0".

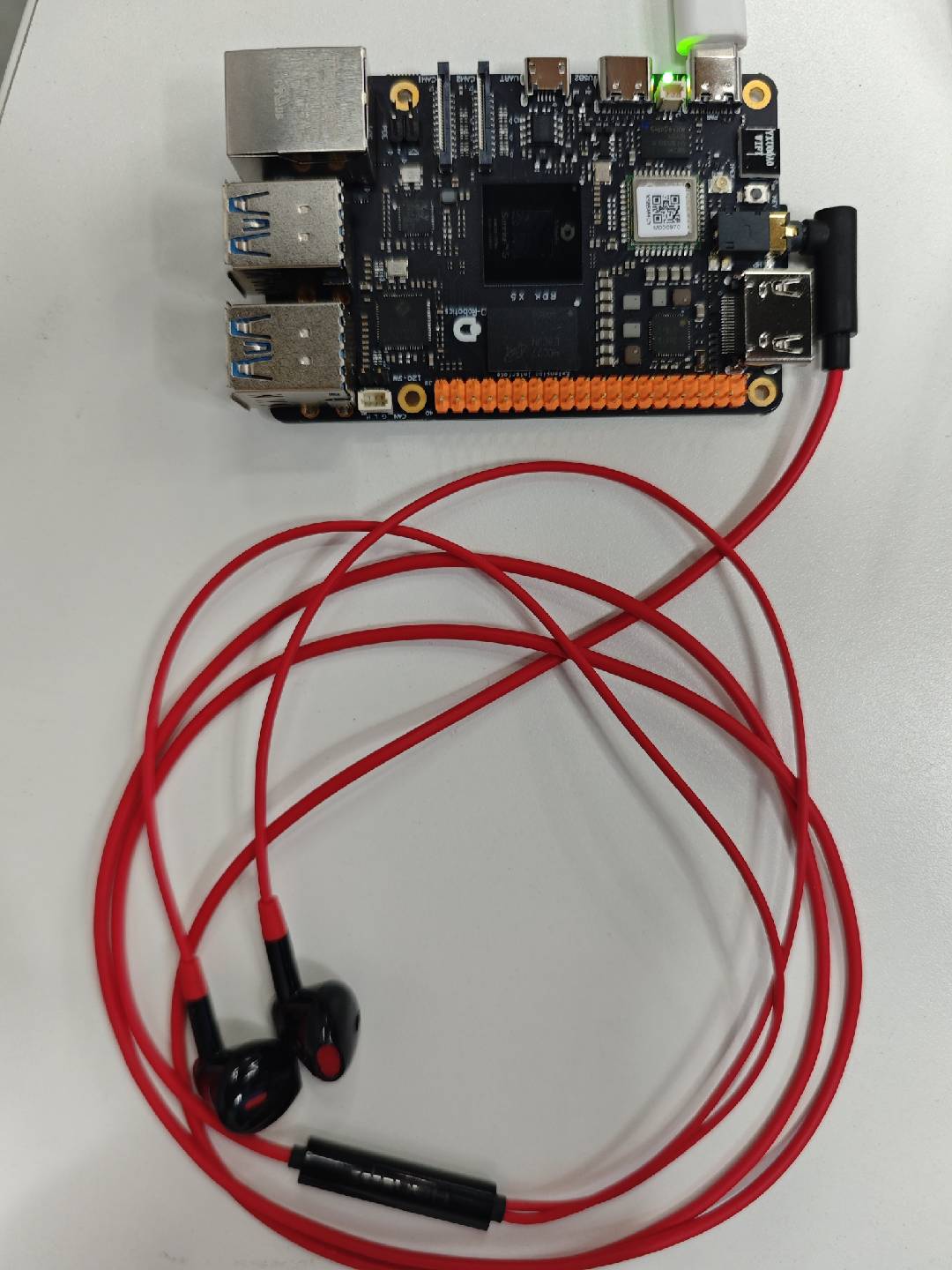

- RDK X5 Audio Connection

- RDK S100 Audio Connection
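The relationship between the `/dev/snd/` listing and a name such as `plughw:0,0` follows the ALSA naming convention: a node `pcmC<card>D<device>c` is a capture endpoint and `pcmC<card>D<device>p` is a playback endpoint. The following is a minimal sketch that turns such node names into `plughw:<card>,<device>` strings and keeps only devices that offer both capture and playback. The function names `pcm_to_plughw` and `duplex_devices` are hypothetical helpers and are not part of the package.

```shell
# Hypothetical helpers (not part of hobot_llamacpp).

# Convert one ALSA PCM node name, e.g. pcmC0D0c, to plughw:<card>,<device>.
# Prints nothing if the name does not follow the pcmC<card>D<device>[cp] form.
pcm_to_plughw() {
  printf '%s\n' "$1" |
    sed -n 's/^pcmC\([0-9][0-9]*\)D\([0-9][0-9]*\)[cp]$/plughw:\1,\2/p'
}

# Given a list of node names, print the plughw name of every device
# that has both a capture node (...c) and a playback node (...p).
duplex_devices() (
  for node in "$@"; do
    case "$node" in
      pcmC*D*c)
        playback="${node%c}p"
        for other in "$@"; do
          if [ "$other" = "$playback" ]; then
            pcm_to_plughw "$node"
          fi
        done
        ;;
    esac
  done
)

# Example on the board (left commented so the sketch has no side effects):
# duplex_devices $(ls /dev/snd/)
```

With the listing shown earlier, this reports both card 0 and card 2 as candidates; which one is the USB speaker depends on the hardware, so the result is only a starting point for choosing the `audio_device` value.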

Usage Guide

# Configure tros.b environment

source /opt/tros/humble/setup.bash

- RDK X5

- RDK S100

Publish images using MIPI camera (RDK X5)

cp -r /opt/tros/${TROS_DISTRO}/lib/hobot_llamacpp/config/ .

# Configure MIPI camera

export CAM_TYPE=mipi

ros2 launch hobot_llamacpp llama_vlm.launch.py audio_device:=plughw:0,0

Publish images using USB camera (RDK X5)

cp -r /opt/tros/${TROS_DISTRO}/lib/hobot_llamacpp/config/ .

# Configure USB camera

export CAM_TYPE=usb

ros2 launch hobot_llamacpp llama_vlm.launch.py audio_device:=plughw:0,0

Use locally looped-back images (RDK X5)

cp -r /opt/tros/${TROS_DISTRO}/lib/hobot_llamacpp/config/ .

# Configure local image loopback

export CAM_TYPE=fb

ros2 launch hobot_llamacpp llama_vlm.launch.py audio_device:=plughw:0,0

Publish images using MIPI camera (RDK S100)

cp -r /opt/tros/${TROS_DISTRO}/lib/hobot_llamacpp/config/ .

# Configure MIPI camera

export CAM_TYPE=mipi

ros2 launch hobot_llamacpp llama_vlm.launch.py llamacpp_vit_model_file_name:=vit_model_int16.hbm audio_device:=plughw:0,0

Publish images using USB camera (RDK S100)

cp -r /opt/tros/${TROS_DISTRO}/lib/hobot_llamacpp/config/ .

# Configure USB camera

export CAM_TYPE=usb

ros2 launch hobot_llamacpp llama_vlm.launch.py llamacpp_vit_model_file_name:=vit_model_int16.hbm audio_device:=plughw:0,0

Use locally looped-back images (RDK S100)

cp -r /opt/tros/${TROS_DISTRO}/lib/hobot_llamacpp/config/ .

# Configure local image loopback

export CAM_TYPE=fb

ros2 launch hobot_llamacpp llama_vlm.launch.py llamacpp_vit_model_file_name:=vit_model_int16.hbm audio_device:=plughw:0,0
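The launch commands above differ only in the `CAM_TYPE` value and in whether `llamacpp_vit_model_file_name` is passed. As a rough sketch, the variants can be generated by one small helper that rejects unknown camera types before anything is launched. The function name `build_vlm_launch_cmd` and its argument order are assumptions made for illustration; only the environment variable, launch file and launch arguments come from the commands above.

```shell
# Hypothetical helper (not part of hobot_llamacpp).
# Usage: build_vlm_launch_cmd <mipi|usb|fb> [audio_device] [vit_model_file]
# Prints the two commands to run; it does not execute them.
build_vlm_launch_cmd() (
  cam_type="$1"
  audio_device="${2:-plughw:0,0}"
  vit_model="${3:-}"

  # Only the three camera types used in this section are accepted.
  case "$cam_type" in
    mipi|usb|fb) ;;
    *) echo "unsupported CAM_TYPE: $cam_type" >&2; exit 1 ;;
  esac

  cmd="ros2 launch hobot_llamacpp llama_vlm.launch.py"
  # Add the ViT model argument only when one is given.
  if [ -n "$vit_model" ]; then
    cmd="$cmd llamacpp_vit_model_file_name:=$vit_model"
  fi

  printf 'export CAM_TYPE=%s\n%s audio_device:=%s\n' \
    "$cam_type" "$cmd" "$audio_device"
)

# Example (prints the USB camera variant without the ViT model argument):
# build_vlm_launch_cmd usb
# Example (prints the MIPI variant with vit_model_int16.hbm):
# build_vlm_launch_cmd mipi plughw:0,0 vit_model_int16.hbm
```

Printing the commands instead of running them keeps the helper safe to try on a machine without a camera or audio device attached.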

After the program starts, you can interact with the device by voice. Say "Hello" to wake up the device, then state your task, for example "Please describe this picture." The device acknowledges the request with "OK"; wait while it runs inference and outputs the result.

Example interaction flow:

- User: "Hello, describe this picture."

- Device: "OK, let me take a look first."

- Device: "This picture shows xxx."
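The interaction above can be read as a two-step gate: recognized text is acted on only if it starts with the wake word, and the remainder becomes the task. The sketch below illustrates that idea on plain strings. It is an assumption about the general pattern, not the package's actual implementation, and the function name `extract_query` is hypothetical.

```shell
# Hypothetical illustration of wake-word gating (not the package's code).
# Prints the task that follows the wake word "Hello";
# returns non-zero if the text does not start with the wake word.
extract_query() (
  text="$1"

  # Accept "hello" in any letter case, alone or followed by a non-letter.
  case "$text" in
    [Hh][Ee][Ll][Ll][Oo]) ;;
    [Hh][Ee][Ll][Ll][Oo][!A-Za-z]*) ;;
    *) exit 1 ;;
  esac

  # Drop the five characters of the wake word, then any leading
  # spaces or punctuation before the task itself.
  rest="${text#?????}"
  printf '%s\n' "$rest" | sed 's/^[[:space:][:punct:]]*//'
)

# Example:
# extract_query "Hello, describe this picture."
```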

Advanced Features

In addition to supporting Vision-Language Models (VLM), this package also supports using pure Language Models (LLM) for conversation:

# Configure tros.b environment

source /opt/tros/humble/setup.bash

cp -r /opt/tros/${TROS_DISTRO}/lib/hobot_llamacpp/config/ .

ros2 launch hobot_llamacpp llama_llm.launch.py llamacpp_gguf_model_file_name:=Qwen2.5-0.5B-Instruct-Q4_0.gguf audio_device:=plughw:0,0

After the program starts, you can interact with the device by voice. Once initialization finishes, the device says "I'm here!" Say "Hello" to wake it up, then ask your question, for example "How should I rest on weekends?" The device then runs inference and speaks its response.

Example interaction flow:

- Device: "I'm here!"

- User: "Hello, how should I rest on weekends?"

- Device: "Rest is important—you could read books, listen to music, draw, or exercise."
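Because the LLM launch takes a model file name through `llamacpp_gguf_model_file_name`, it can help to confirm that a downloaded file really is in GGUF format before launching. GGUF files begin with the four ASCII bytes `GGUF`, so a quick check is possible with standard tools. This is a minimal sketch; the function name `is_gguf` is hypothetical, and the check only inspects the file header, not whether the model is compatible with the board.

```shell
# Hypothetical helper (not part of hobot_llamacpp).
# Succeeds if the file exists and starts with the GGUF magic bytes.
is_gguf() {
  [ -f "$1" ] || return 1
  # Read the first four bytes; strip NUL bytes so the comparison is clean.
  [ "$(dd if="$1" bs=4 count=1 2>/dev/null | tr -d '\000')" = "GGUF" ]
}

# Example (file path is whatever you downloaded):
# is_gguf Qwen2.5-0.5B-Instruct-Q4_0.gguf && echo "looks like a GGUF file"
```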

Notes

- Regarding the ASR module: Even when no wake word is detected, the ASR module still outputs logs to the serial console after startup. You can speak to verify whether audio is being detected. If nothing is detected, first check the device status and device ID using `ls /dev/snd/`.

- Regarding wake-word functionality: The wake word "Hello" may occasionally fail to be recognized, causing subsequent commands to be ignored. If issues occur, check the logs for entries like `[llama_cpp_node]: Recved string data: xxx`. If present, it indicates that text was successfully recognized.

- Regarding audio devices: It is generally recommended to use the same device for both recording and playback to avoid echo. If separate devices are used, you can modify the device name by searching for the `audio_device` field in the file `/opt/tros/${TROS_DISTRO}/share/hobot_llamacpp/launch/llama_vlm.launch.py`.

- Regarding model selection: Currently, the VLM only supports the large model provided in this example. For LLM, you can use any GGUF-converted model from the Hugging Face community: https://huggingface.co/models?search=GGUF.
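When checking whether the wake word was recognized, it can be easier to filter the log for the `Recved string data` entries mentioned in the notes than to read the full output. The following is a small sketch that prints only the recognized text from such lines. The function name `recognized_text` is hypothetical, and the pattern assumes the log line contains the exact text `[llama_cpp_node]: Recved string data: ` followed by the recognized string.

```shell
# Hypothetical helper (not part of hobot_llamacpp).
# Reads log lines on stdin and prints the text after
# "[llama_cpp_node]: Recved string data: " for each matching line.
recognized_text() {
  sed -n 's/.*\[llama_cpp_node]: Recved string data: \(.*\)$/\1/p'
}

# Example: save the launch output to a file, then filter it.
# ros2 launch hobot_llamacpp llama_vlm.launch.py audio_device:=plughw:0,0 2>&1 | tee vlm.log
# recognized_text < vlm.log
```

If this prints nothing while you are speaking, the text is not reaching the node, which points back to the ASR and audio device checks in the notes above.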