Codec

System Overview

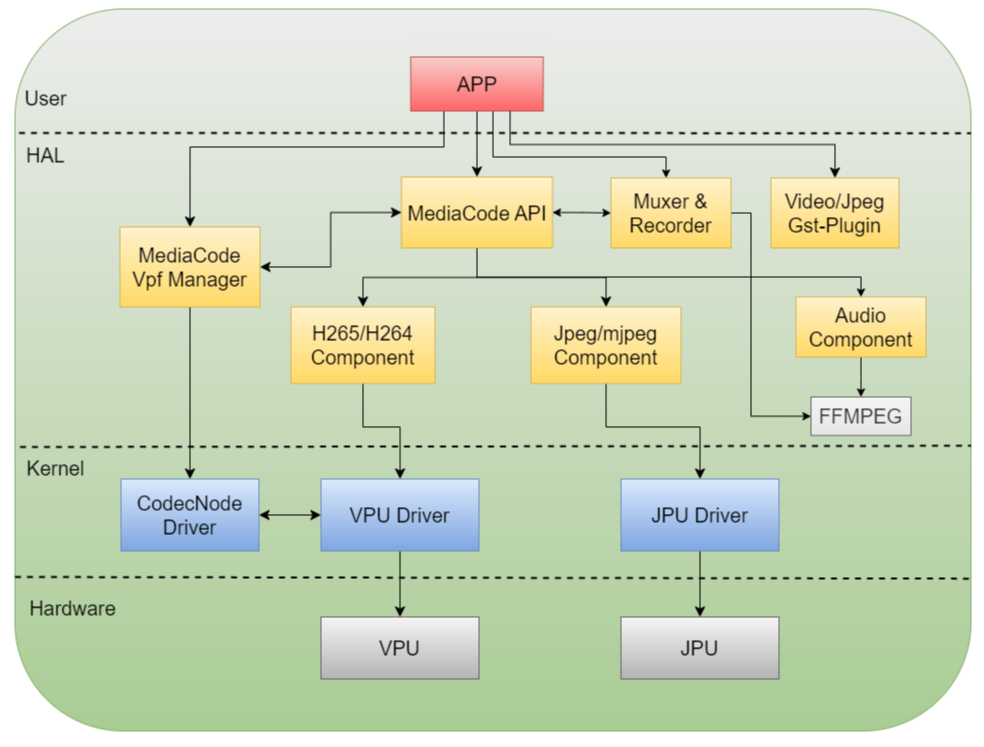

Overview

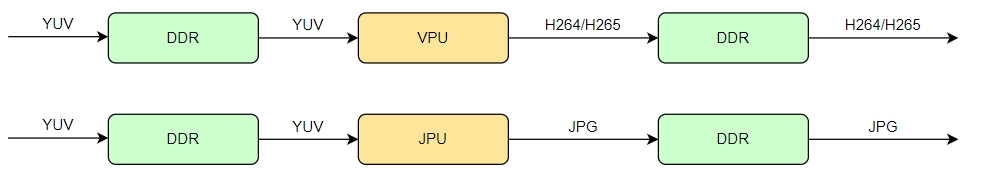

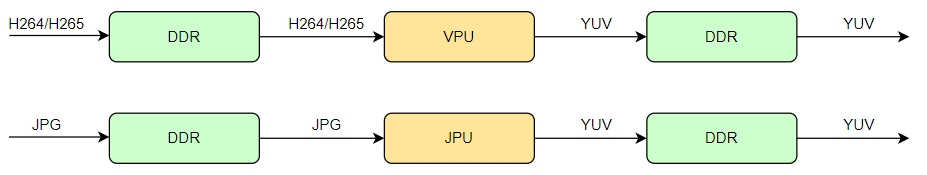

A codec (coder-decoder) compresses or decompresses media data such as video, images, and audio. The S100 SoC includes two hardware codec units: the VPU (Video Processing Unit) and the JPU (JPEG Processing Unit), which provide 4K@90fps video codec capability and 4K@90fps image codec capability, respectively.

JPU Hardware Features

| HW Feature | Feature Indicator |

|---|---|

| HW number | 1 |

| maximum input | 8192x8192 |

| minimum input | 32x32 |

| performance | 4K@90fps |

| max instance | 64 |

| input image format | 4:0:0, 4:2:0, 4:2:2, 4:4:0, and 4:4:4 color format |

| output image format | 4:0:0, 4:2:0, 4:2:2, 4:4:0, and 4:4:4 color format |

| input crop | Supports |

| bitrate control | FIXQP(MJPEG) |

| rotation | 90, 180, 270 |

| mirror | Vertical, Horizontal, Vertical+Horizontal |

| quantization table | Supports Custom Settings |

| huffman table | Supports Custom Settings |

VPU Hardware Features

| HW Feature | Feature Indicator |

|---|---|

| HW number | 1 |

| maximum input | 8192x4096 |

| minimum input | 256x128 |

| input alignment required | width 32, height 8 |

| performance | 4K@90fps |

| max instance | 32 |

| input image format | 4:2:0, 4:2:2 color format |

| output image format | 4:2:0, 4:2:2 color format |

| input crop | Supports |

| bitrate control | CBR, VBR, AVBR, FIXQP, QPMAP |

| rotation | 90, 180, 270 |

| mirror | Vertical, Horizontal, Vertical+Horizontal |

| long-term reference prediction | Supports Custom Settings |

| intra refresh | Supports |

| deblocking filter | Supports |

| request IDR | Supports |

| ROI mode | mode1: users can set a QP value (0-51) for each of up to 64 zones; should not be used with CBR or AVBR mode. mode2: users can set an importance level (0-8) for each of up to 64 zones; should be used with CBR or AVBR mode. |

| GOP mode | 0: custom GOP; 1: I-I-I-I… (all intra, gop_size=1); 2: I-P-P-P… (consecutive P, gop_size=1); 3: I-B-B-B… (consecutive B, gop_size=1); 4: I-B-P-B-P… (gop_size=2); 5: I-B-B-B-P… (gop_size=4); 6: I-P-P-P-P… (consecutive P, gop_size=4); 7: I-B-B-B-B… (consecutive B, gop_size=4); 8: I-B-B-B-B-B-B-B-B… (random access, gop_size=8); 9: I-P-P-P… (consecutive P, gop_size=1, with single reference) |
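
The size limits in the VPU table can be checked before a frame is submitted. The following is a minimal sketch, not part of the codec API: the helper names `align_up` and `vpu_input_size_ok` are hypothetical, and it assumes that "input alignment required" means the width must be a multiple of 32 and the height a multiple of 8.

```c
#include <stdbool.h>
#include <stdint.h>

/* Limits copied from the VPU hardware feature table. */
#define VPU_MIN_WIDTH     256u
#define VPU_MIN_HEIGHT    128u
#define VPU_MAX_WIDTH     8192u
#define VPU_MAX_HEIGHT    4096u
#define VPU_WIDTH_ALIGN   32u
#define VPU_HEIGHT_ALIGN  8u

/* Hypothetical helper: round value up to a power-of-two alignment. */
static uint32_t align_up(uint32_t value, uint32_t align)
{
    return (value + align - 1u) & ~(align - 1u);
}

/* Hypothetical helper: true if the size satisfies the table's range and
 * alignment constraints (assumed to mean "multiple of"). */
static bool vpu_input_size_ok(uint32_t width, uint32_t height)
{
    if (width < VPU_MIN_WIDTH || width > VPU_MAX_WIDTH) {
        return false;
    }
    if (height < VPU_MIN_HEIGHT || height > VPU_MAX_HEIGHT) {
        return false;
    }
    return (width % VPU_WIDTH_ALIGN == 0u) && (height % VPU_HEIGHT_ALIGN == 0u);
}
```

Under these assumptions, 1920x1080 and 3840x2160 pass the check, while a width of 1900 fails and `align_up(1900, 32)` gives 1920 as the next usable width.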

Software Features

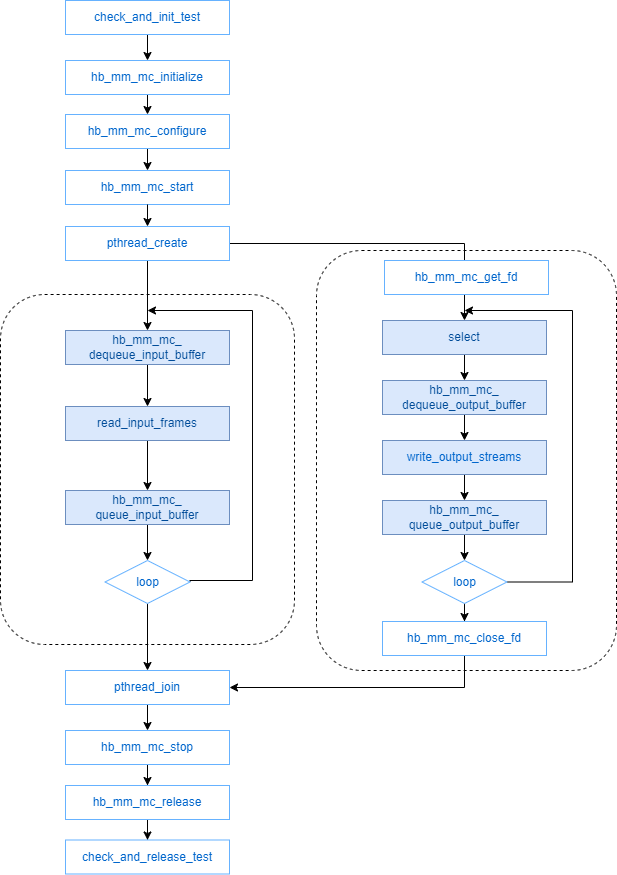

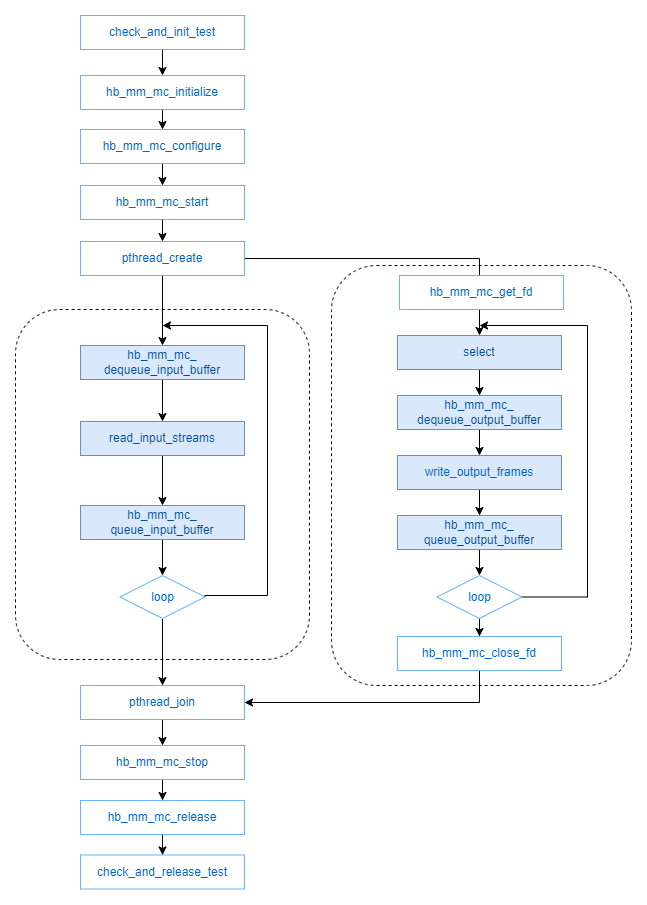

Overall Framework

The MediaCodec subsystem provides components for audio/video and image codec, raw stream packaging, and video recording. This system primarily encapsulates underlying codec hardware resources and software codec libraries to offer codec capabilities to upper layers. Developers can implement H.265 and H.264 video encoding/decoding functionalities using the provided codec APIs, use JPEG encoding to save camera data as JPEG images, or utilize the video recording feature to record camera data.

Bitrate Control Modes

MediaCodec supports bitrate control for H.264/H.265 and MJPEG protocols, offering five bitrate control methods for H.264/H.265 encoding channels—CBR, VBR, AVBR, FixQp, and QpMap—and FixQp bitrate control for MJPEG encoding channels.

CBR Description

CBR stands for Constant Bitrate, ensuring stable overall encoding bitrate. Below are parameter descriptions for CBR mode:

| Parameter | Description | Range | Default |

|---|---|---|---|

| intra_period | I-frame interval | [0,2047] | 28 |

| intra_qp | QP value for I-frames | [0,51] | 0 |

| bit_rate | Target average bitrate, in kbps | [0,700000] | 0 |

| frame_rate | Target frame rate, in fps | [1,240] | 30 |

| initial_rc_qp | Initial QP value for rate control; if outside [0,51], the encoder internally determines the initial value | [0,63] | 63 |

| vbv_buffer_size | VBV buffer size in ms; actual VBV buffer size = bit_rate * vbv_buffer_size / 1000 (kb). Buffer size affects encoding quality and bitrate control accuracy: smaller buffers yield higher bitrate control precision but lower image quality; larger buffers improve image quality but increase bitrate fluctuation. | [10,3000] | 10 |

| ctu_level_rc_enable | H.264/H.265 rate control can operate at CTU level for higher bitrate control precision at the cost of encoding quality. This mode cannot be used with ROI encoding; it is automatically disabled when ROI encoding is enabled. | [0,1] | 0 |

| min_qp_I | Minimum QP value for I-frames | [0,51] | 8 |

| max_qp_I | Maximum QP value for I-frames | [0,51] | 51 |

| min_qp_P | Minimum QP value for P-frames | [0,51] | 8 |

| max_qp_P | Maximum QP value for P-frames | [0,51] | 51 |

| min_qp_B | Minimum QP value for B-frames | [0,51] | 8 |

| max_qp_B | Maximum QP value for B-frames | [0,51] | 51 |

| hvs_qp_enable | H.264/H.265 rate control can operate at sub-CTU level, adjusting sub-macroblock QP values to improve subjective image quality. | [0,1] | 1 |

| hvs_qp_scale | Effective when hvs_qp_enable is enabled; represents QP scaling factor. | [0,4] | 2 |

| max_delta_qp | Effective when hvs_qp_enable is enabled; specifies maximum allowable deviation for HVS QP values. | [0,51] | 10 |

| qp_map_enable | Enables QP map for ROI encoding. | [0,1] | 0 |
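
The vbv_buffer_size formula in the table can be worked through in code. This is an illustrative sketch only; the function name `vbv_buffer_kbits` is hypothetical and simply evaluates bit_rate * vbv_buffer_size / 1000 with a 64-bit intermediate to avoid overflow at the top of the parameter ranges.

```c
#include <stdint.h>

/* Actual VBV buffer size in kilobits, per the table:
 * bit_rate (kbps) * vbv_buffer_size (ms) / 1000. */
static uint64_t vbv_buffer_kbits(uint32_t bit_rate_kbps, uint32_t vbv_buffer_size_ms)
{
    return ((uint64_t)bit_rate_kbps * vbv_buffer_size_ms) / 1000u;
}
```

For example, a 5000 kbps target with vbv_buffer_size = 3000 ms gives a 15000 kb buffer, while the CBR default of 10 ms gives only 50 kb, which is why the small default favors tight bitrate control over image quality.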

VBR Description

VBR stands for Variable Bitrate, allocating higher QP (lower quality, higher compression) for simple scenes and lower QP (higher quality) for complex scenes to maintain consistent visual quality. Below are parameter descriptions for VBR mode:

| Parameter | Description | Range | Default |

|---|---|---|---|

| intra_period | I-frame interval | [0,2047] | 28 |

| intra_qp | QP value for I-frames | [0,51] | 0 |

| frame_rate | Target frame rate, in fps | [1,240] | 0 |

| qp_map_enable | Enables QP map for ROI encoding | [0,1] | 0 |

AVBR Description

AVBR stands for Average Variable Bitrate. Like VBR, it allocates lower bitrates to simple scenes and sufficient bitrates to complex scenes; like CBR, it keeps the average bitrate close to the target over time, which controls the output file size. It is a compromise between CBR and VBR that produces relatively stable bitrate and image quality. Below are parameter descriptions for AVBR mode:

| Parameter | Description | Range | Default |

|---|---|---|---|

| intra_period | I-frame interval | [0,2047] | 28 |

| intra_qp | QP value for I-frames | [0,51] | 0 |

| bit_rate | Target average bitrate, in kbps | [0,700000] | 0 |

| frame_rate | Target frame rate, in fps | [1,240] | 30 |

| initial_rc_qp | Initial QP value for rate control; if outside [0,51], the encoder internally determines the initial value | [0,63] | 63 |

| vbv_buffer_size | VBV buffer size in ms; actual VBV buffer size = bit_rate * vbv_buffer_size / 1000 (kb). Buffer size affects encoding quality and bitrate control accuracy: smaller buffers yield higher bitrate control precision but lower image quality; larger buffers improve image quality but increase bitrate fluctuation. | [10,3000] | 3000 |

| ctu_level_rc_enable | H.264/H.265 rate control can operate at CTU level for higher bitrate control precision at the cost of encoding quality. This mode cannot be used with ROI encoding; it is automatically disabled when ROI encoding is enabled. | [0,1] | 0 |

| min_qp_I | Minimum QP value for I-frames | [0,51] | 8 |

| max_qp_I | Maximum QP value for I-frames | [0,51] | 51 |

| min_qp_P | Minimum QP value for P-frames | [0,51] | 8 |

| max_qp_P | Maximum QP value for P-frames | [0,51] | 51 |

| min_qp_B | Minimum QP value for B-frames | [0,51] | 8 |

| max_qp_B | Maximum QP value for B-frames | [0,51] | 51 |

| hvs_qp_enable | H.264/H.265 rate control can operate at sub-CTU level, adjusting sub-macroblock QP values to improve subjective image quality. | [0,1] | 1 |

| hvs_qp_scale | Effective when hvs_qp_enable is enabled; represents QP scaling factor. | [0,4] | 2 |

| max_delta_qp | Effective when hvs_qp_enable is enabled; specifies maximum allowable deviation for HVS QP values. | [0,51] | 10 |

| qp_map_enable | Enables QP map for ROI encoding. | [0,1] | 0 |

FixQp Description

FixQp assigns a fixed QP value to every frame; I-frames, P-frames, and B-frames can each use a different value. Below are parameter descriptions for FixQp mode:

| Parameter | Description | Range | Default |

|---|---|---|---|

| intra_period | I-frame interval | [0,2047] | 28 |

| frame_rate | Target frame rate, in fps | [1,240] | 30 |

| force_qp_I | Forced QP value for I-frames | [0,51] | 0 |

| force_qp_P | Forced QP value for P-frames | [0,51] | 0 |

| force_qp_B | Forced QP value for B-frames | [0,51] | 0 |

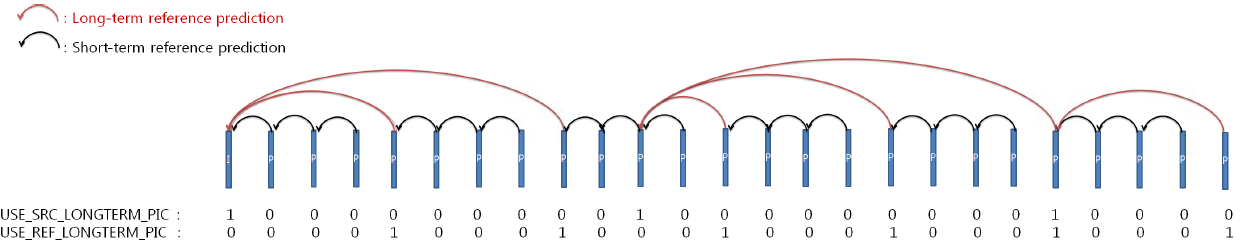

QPMAP Description

QPMAP allows specifying QP values for each block within a frame: 32x32 for H.265 and 16x16 for H.264. Below are parameter descriptions for QPMAP mode:

| Parameter | Description | Range | Default |

|---|---|---|---|

| intra_period | I-frame interval | [0,2047] | 28 |

| frame_rate | Target frame rate, in fps | [1,240] | 30 |

| qp_map_array | Specifies QP map table; for H.265, sub-CTU size is 32x32—each sub-CTU requires one QP value (1 byte), ordered in raster scan. | Pointer address | NULL |

| qp_map_array_count | Specifies size of QP map table. | [0, MC_VIDEO_MAX_SUB_CTU_NUM] && (ALIGN64(picWidth)>>5) * (ALIGN64(picHeight)>>5) | 0 |
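
The qp_map_array_count expression for H.265 can be evaluated as follows. This is a sketch under stated assumptions: `ALIGN64` is assumed to round up to the next multiple of 64 (its definition is not given here), and the helpers `h265_qp_map_count` and `fill_uniform_qp_map` are hypothetical names, not codec API functions.

```c
#include <stdint.h>
#include <string.h>

/* Assumed definition: round x up to the next multiple of 64. */
#define ALIGN64(x) (((x) + 63u) & ~63u)

/* Number of 32x32 sub-CTUs, i.e. one QP byte per sub-CTU in raster order. */
static uint32_t h265_qp_map_count(uint32_t pic_width, uint32_t pic_height)
{
    return (ALIGN64(pic_width) >> 5) * (ALIGN64(pic_height) >> 5);
}

/* Hypothetical helper: fill a caller-allocated map with one QP value.
 * Returns -1 if the map is NULL or the QP is outside [0,51]. */
static int fill_uniform_qp_map(uint8_t *map, uint32_t count, uint8_t qp)
{
    if (map == NULL || qp > 51u) {
        return -1;
    }
    memset(map, qp, count);
    return 0;
}
```

For a 1920x1080 picture the height aligns up to 1088, so the map needs 60 * 34 = 2040 entries; a 4096x2160 picture needs 128 * 68 = 8704 entries.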

Debugging Methods

Encoding Quality Tuning

Customers encoding video with the codec commonly select CBR mode. In complex scenes, the hardware automatically raises the bitrate to maintain video quality, which produces larger-than-expected output files. To balance video quality against actual bitrate, bit_rate and max_qp_I/max_qp_P must be tuned together. The table below shows the actual bitrate and average QP in all-I-frame mode with a target bitrate of 15000 kbps, for an outdoor daytime complex scene at several max_qp_I values (results vary with scene complexity):

| Scene & Parameters | Outdoor Daytime Complex Scene bitrate(15000) max_qp_I(35) | Outdoor Daytime Complex Scene bitrate(15000) max_qp_I(38) | Outdoor Daytime Complex Scene bitrate(15000) max_qp_I(39) |

|---|---|---|---|

| Bit allocation (bps) (higher = better quality) | 60300045 | 42186920 | 35898230 |

| Avg QP (lower = better quality) | 35 | 38 | 39 |
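
To make the gap between target and actual bitrate concrete, the table values can be turned into an overshoot ratio. This is a small arithmetic sketch; `bitrate_overshoot` is a hypothetical helper, and it assumes the "Bit allocation (bps)" row is the measured output bitrate in bits per second.

```c
#include <stdint.h>

/* Ratio of measured bitrate (bps) to target bitrate (kbps).
 * Returns 0.0 when no target is set. */
static double bitrate_overshoot(uint64_t measured_bps, uint32_t target_kbps)
{
    if (target_kbps == 0u) {
        return 0.0;
    }
    return (double)measured_bps / ((double)target_kbps * 1000.0);
}
```

With the values above, max_qp_I = 35 yields about 4.0 times the 15000 kbps target, and max_qp_I = 39 still yields about 2.4 times the target, so raising max_qp_I narrows the gap but does not close it for this scene.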

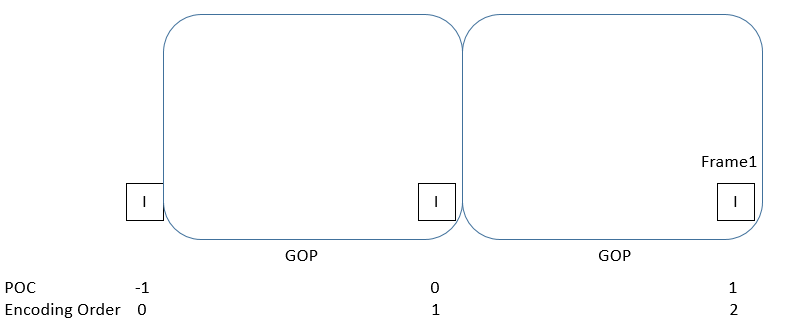

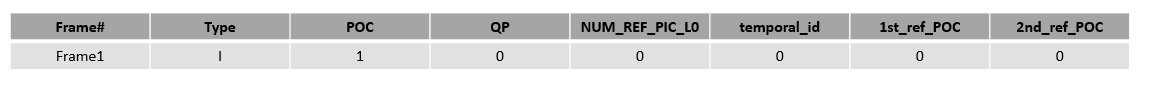

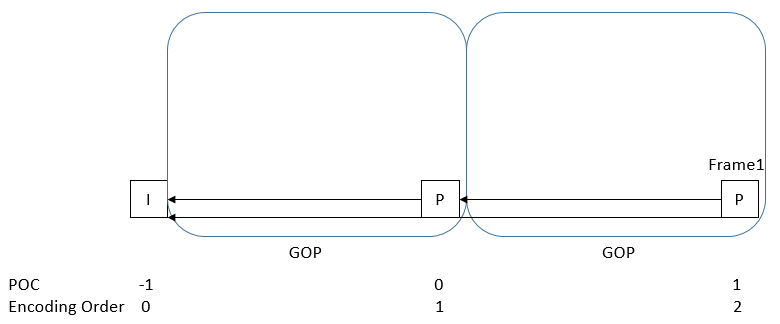

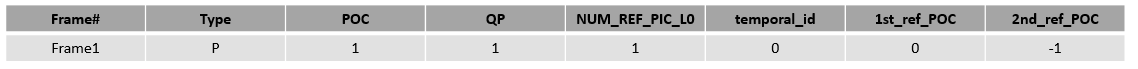

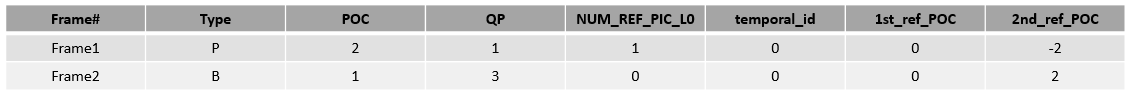

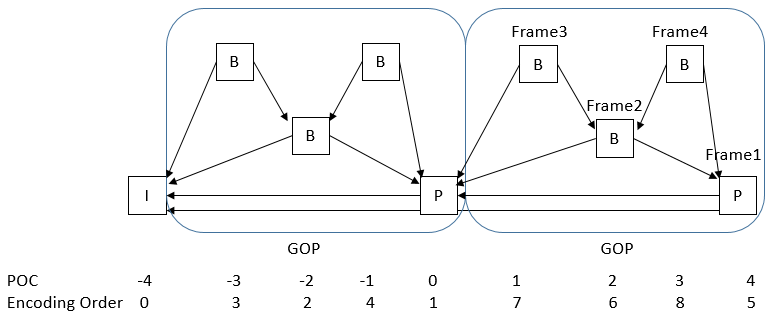

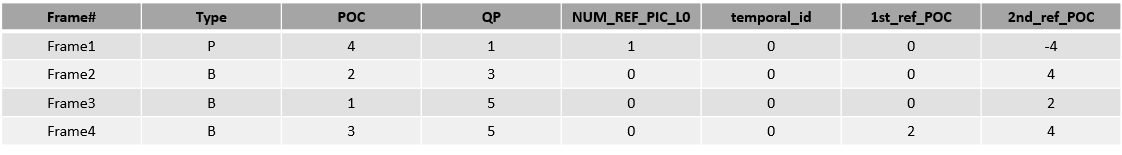

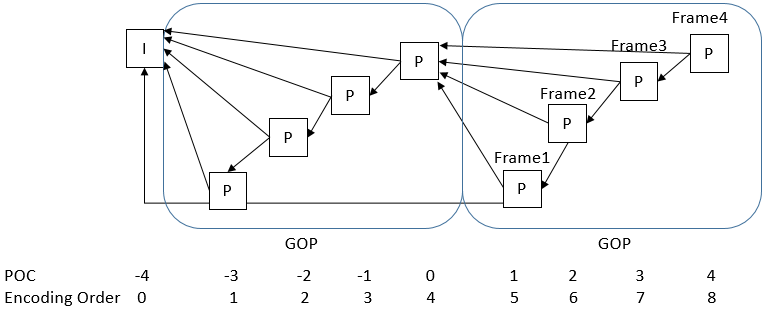

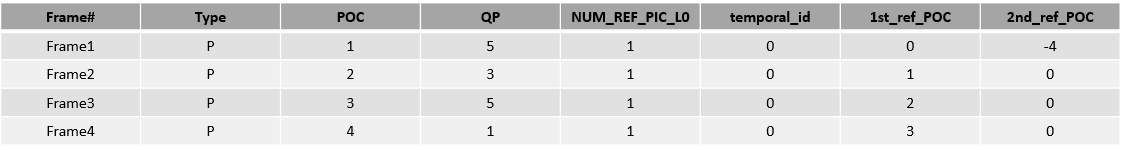

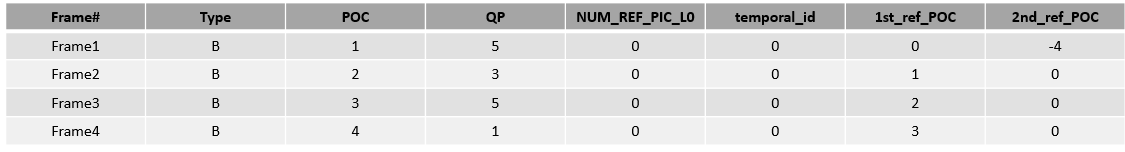

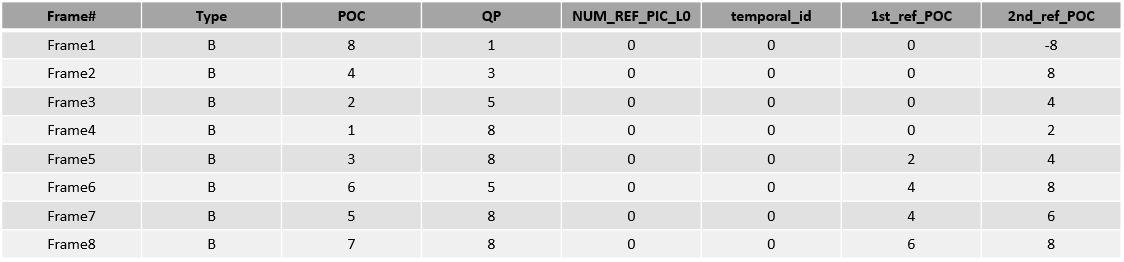

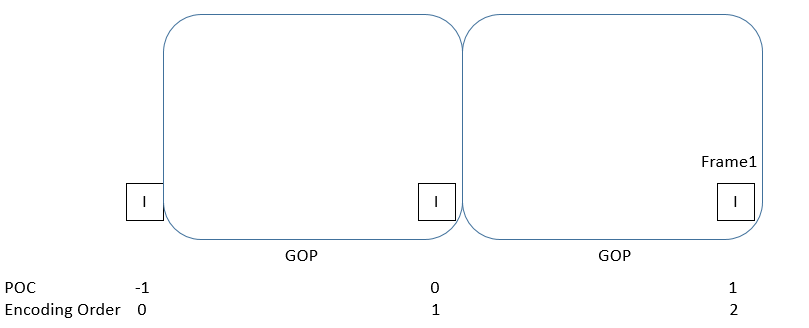

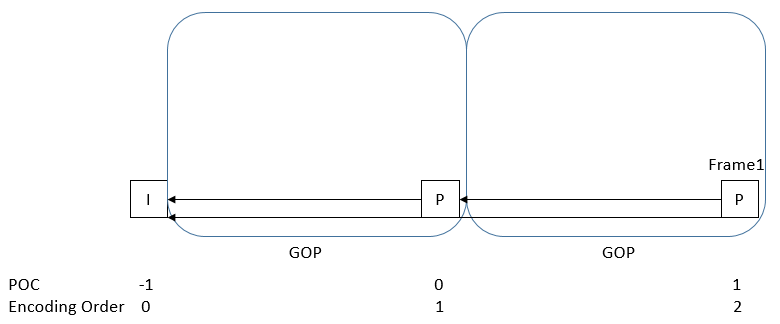

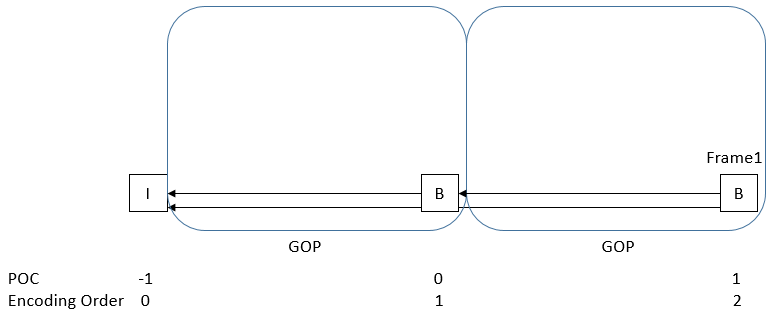

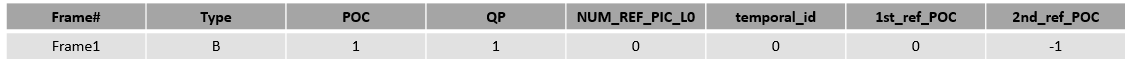

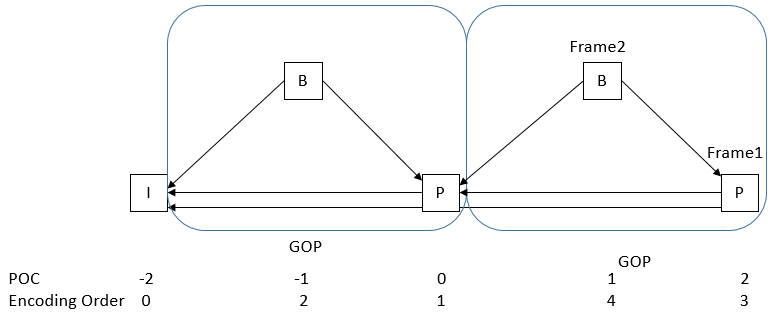

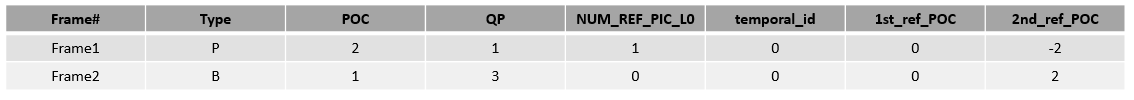

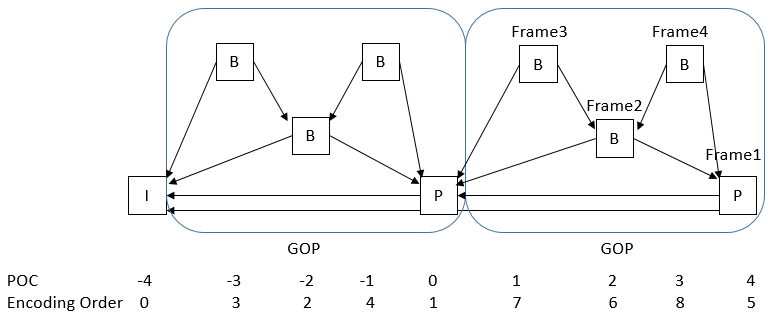

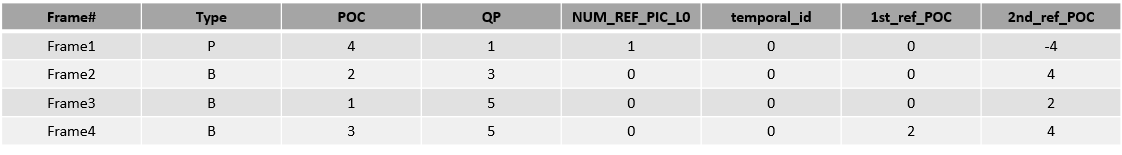

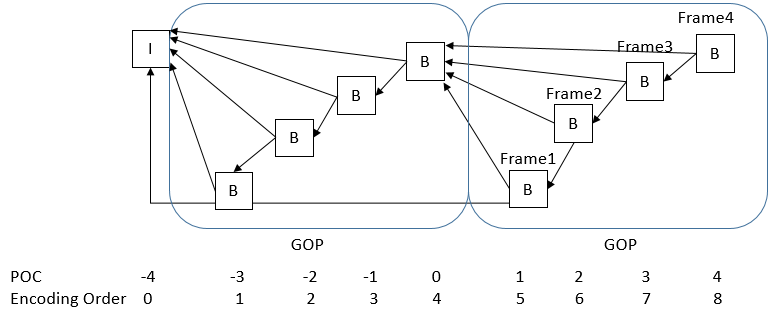

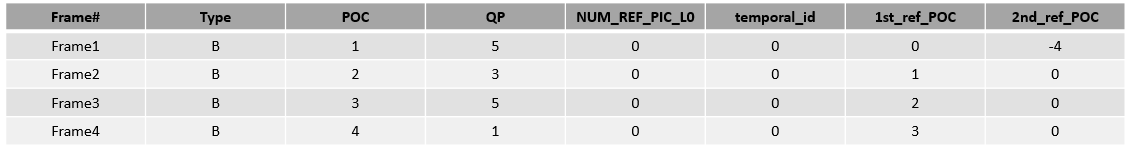

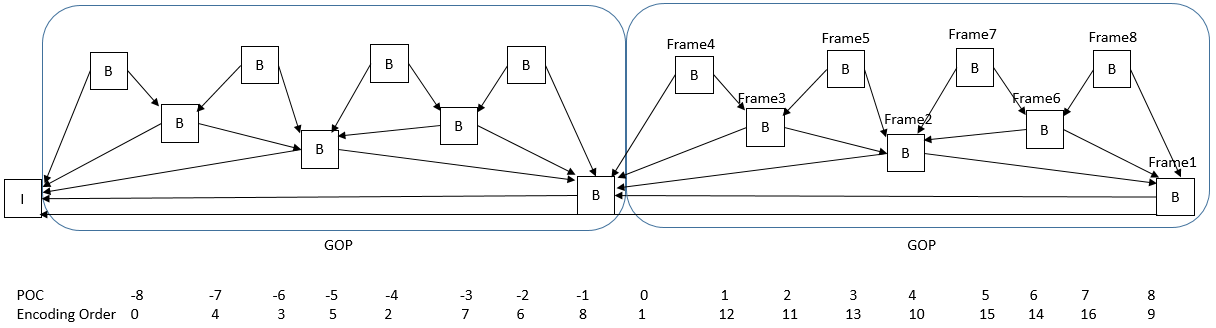

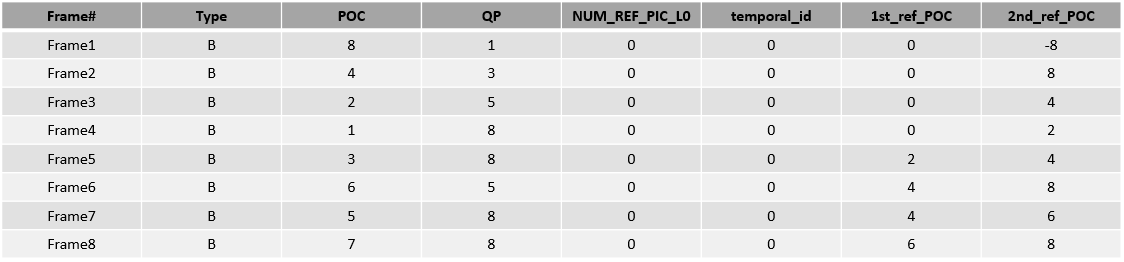

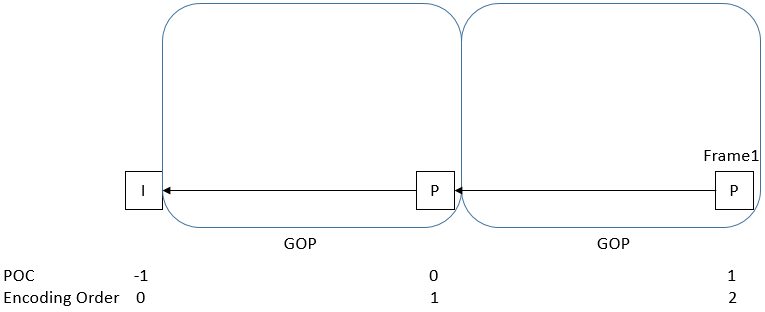

GOP Structure Description

H.264/H.265 encoding supports configurable GOP structures: users can select one of nine preset GOP structures or define a custom GOP structure.

A GOP structure table defines a periodic GOP pattern applied throughout the encoding process. Elements within a single structure table are described below. Reference frames for each picture can be specified; if frames after an IDR reference frames before the IDR, the encoder internally handles this to prevent cross-IDR referencing—users need not manage this. When defining custom GOP structures, users must specify the number of structure tables (up to 3), ordered by decoding sequence.

Below describes elements within the structure table:

| Element | Description |

|---|---|

| Type | Frame type (I, P, B) |

| POC | Display order within GOP, range [1, gop_size]. |

| QPoffset | Quantization parameter for pictures in custom GOP |

| NUM_REF_PIC_L0 | Indicates multi-reference pictures for P-frames; valid only when PIC_TYPE is P. |

| temporal_id | Temporal layer of the frame, range 0 to 6; a frame cannot predict from frames with a higher temporal_id. |

| 1st_ref_POC | POC of first reference picture in L0 |

| 2nd_ref_POC | For B-frames, first reference picture POC is in L1; for P-frames, second reference picture POC is in L0. reference_L1 and reference_L0 may share the same POC in B-slices, but different POCs are recommended for compression efficiency. |

Preset GOP Structures

Nine preset GOP structures are supported:

| Index | GOP Structure | Low Latency (encoding order = display order) | GOP Size | Encoding Order | Min Source Frame Buffer Count | Min Decoded Picture Buffer Count | Period Requirement (I-frame interval) |

|---|---|---|---|---|---|---|---|

| 1 | I | Yes | 1 | I0-I1-I2… | 1 | 1 | |

| 2 | P | Yes | 1 | P0-P1-P2… | 1 | 2 | |

| 3 | B | Yes | 1 | B0-B1-B2… | 1 | 3 | |

| 4 | BP | No | 2 | B1-P0-B3-P2… | 1 | 3 | |

| 5 | BBBP | No | 4 | B2-B1-B3-P0… | 7 | 4 | |

| 6 | PPPP | Yes | 4 | P0-P1-P2-P3… | 1 | 2 | |

| 7 | BBBB | Yes | 4 | B0-B1-B2-B3… | 1 | 3 | |

| 8 | BBBB BBBB | No | 8 | B3-B2-B4-B1-B6-B5-B7-B0… | 12 | 5 | |

| 9 | P | Yes | 1 | P0 | 1 | 2 | |

GOP Preset 1

- Contains only I-frames with no inter-frame referencing;

- Low latency;

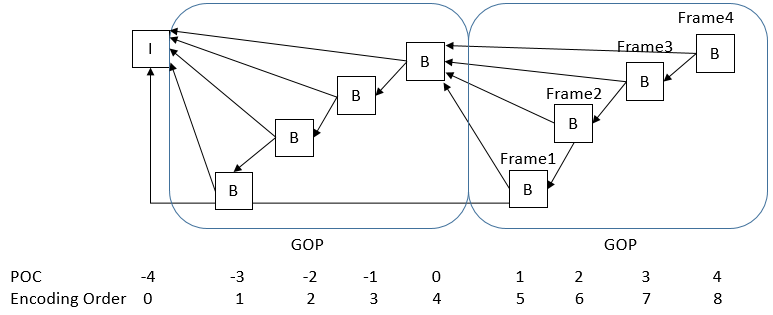

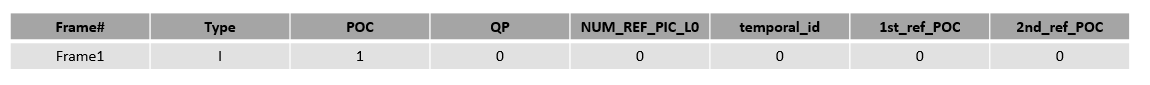

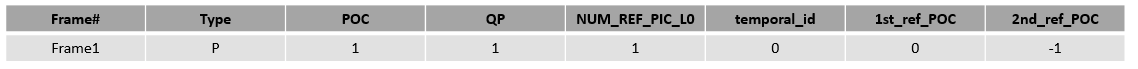

GOP Preset 2

- Contains only I-frames and P-frames;

- P-frames reference 2 forward reference frames;

- Low latency;

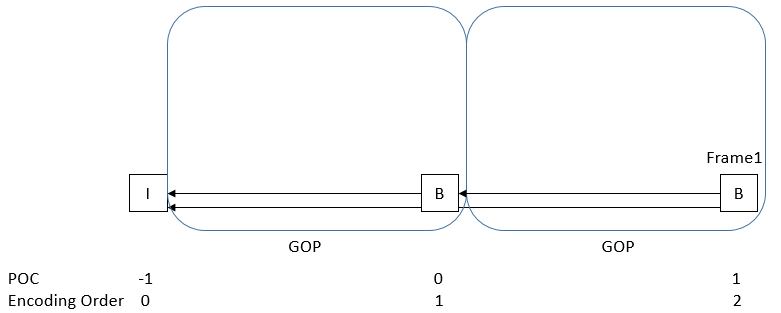

GOP Preset 3

- Contains only I-frames and B-frames;

- B-frames reference 2 forward reference frames;

- Low latency;

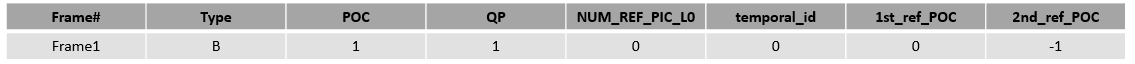

GOP Preset 4

- Contains only I-frames, P-frames, and B-frames;

- P-frames reference 2 forward reference frames;

- B-frames reference 1 forward reference frame and 1 backward reference frame;

GOP Preset 5

- Contains only I-frames, P-frames, and B-frames;

- P-frames reference 2 forward reference frames;

- B-frames reference 1 forward reference frame and 1 backward reference frame, where the backward reference frame can be either a P-frame or a B-frame;

GOP Preset 6

- Contains only I-frames and P-frames;

- P-frames reference 2 forward reference frames;

- Low latency;

GOP Preset 7

- Contains only I-frames and B-frames;

- B-frames reference 2 forward reference frames;

- Low latency;

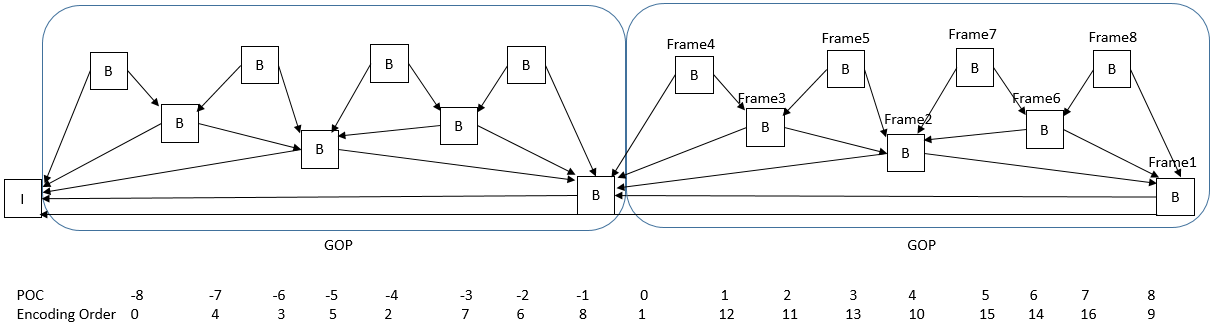

GOP Preset 8

- Contains only I-frames and B-frames;

- B-frames reference 1 forward reference frame and 1 backward reference frame;

GOP Preset 9

- Contains only I-frames and P-frames;

- P-frames reference 1 forward reference frame;

- Low latency;

VPU Debugging Method

The VPU (Video Processing Unit) is a dedicated video processing unit that handles video content efficiently. It encodes and decodes H.264 and H.265 video. Users obtain the encoded bitstream or decoded frames through the interfaces provided by Codec.

Encoding Status

Encoding Debug Information

cat /sys/kernel/debug/vpu/venc

root@ubuntu:~# cat /sys/kernel/debug/vpu/venc

----encode enc param----

enc_idx enc_id profile level width height pix_fmt fbuf_count extern_buf_flag bsbuf_count bsbuf_size mirror rotate

0 h265 Main unspecified 4096 2160 0 5 1 5 13271040 0 0

----encode h265cbr param----

enc_idx rc_mode intra_period intra_qp bit_rate frame_rate initial_rc_qp vbv_buffer_size ctu_level_rc_enalbe min_qp_I max_qp_I min_qp_P max_qp_P min_qp_B max_qp_B hvs_qp_enable hvs_qp_scale qp_map_enable max_delta_qp

0 h265cbr 20 30 5000 30 30 3000 1 8 50 8 50 8 50 1 2 0 10

----encode gop param----

enc_idx enc_id gop_preset_idx custom_gop_size decoding_refresh_type

0 h265 2 0 2

----encode intra refresh----

enc_idx enc_id intra_refresh_mode intra_refresh_arg

0 h265 0 0

----encode longterm ref----

enc_idx enc_id use_longterm longterm_pic_period longterm_pic_using_period

0 h265 0 0 0

----encode roi_params----

enc_idx enc_id roi_enable roi_map_array_count

0 h265 0 0

----encode mode_decision 1----

enc_idx enc_id mode_decision_enable pu04_delta_rate pu08_delta_rate pu16_delta_rate pu32_delta_rate pu04_intra_planar_delta_rate pu04_intra_dc_delta_rate pu04_intra_angle_delta_rate pu08_intra_planar_delta_rate pu08_intra_dc_delta_rate pu08_intra_angle_delta_rate pu16_intra_planar_delta_rate pu16_intra_dc_delta_rate pu16_intra_angle_delta_rate

0 h265 0 0 0 0 0 0 0 0 0 0 0 0 0 0

----encode mode_decision 2----

enc_idx enc_id pu32_intra_planar_delta_rate pu32_intra_dc_delta_rate pu32_intra_angle_delta_rate cu08_intra_delta_rate cu08_inter_delta_rate cu08_merge_delta_rate cu16_intra_delta_rate cu16_inter_delta_rate cu16_merge_delta_rate cu32_intra_delta_rate cu32_inter_delta_rate cu32_merge_delta_rate

0 h265 0 0 0 0 0 0 0 0 0 0 0 0

----encode h265_transform----

enc_idx enc_id chroma_cb_qp_offset chroma_cr_qp_offset user_scaling_list_enable

0 h265 0 0 0

----encode h265_pred_unit----

enc_idx enc_id intra_nxn_enable constrained_intra_pred_flag strong_intra_smoothing_enabled_flag max_num_merge

0 h265 1 0 1 2

----encode h265 timing----

enc_idx enc_id vui_num_units_in_tick vui_time_scale vui_num_ticks_poc_diff_one_minus1

0 h265 1000 30000 0

----encode h265 slice params----

enc_idx enc_id h265_independent_slice_mode h265_independent_slice_arg h265_dependent_slice_mode h265_dependent_slice_arg

0 h265 0 0 0 0

----encode h265 deblk filter----

enc_idx enc_id slice_deblocking_filter_disabled_flag slice_beta_offset_div2 slice_tc_offset_div2 slice_loop_filter_across_slices_enabled_flag

0 h265 0 0 0 1

----encode h265 sao param----

enc_idx enc_id sample_adaptive_offset_enabled_flag

0 h265 1

----encode status----

enc_idx enc_id cur_input_buf_cnt cur_output_buf_cnt left_recv_frame left_enc_frame total_input_buf_cnt total_output_buf_cnt fps

0 h265 4 1 0 0 1093 1089 35

Parameter Explanation

| Debug Info Group | Status Parameter | Description |

|---|---|---|

| encode enc param | Basic Encoding Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; profile: Profile type; level: H.265 level type; width: Encoding width; height: Encoding height; pix_fmt: Input frame pixel format; fbuf_count: Number of input frame buffers; extern_buf_flag: Whether external input buffers are used; bsbuf_count: Number of bitstream buffers; bsbuf_size: Bitstream buffer size; mirror: Whether mirroring is enabled; rotate: Whether rotation is enabled |

| encode h265cbr param | CBR Rate Control Parameters | enc_idx: Encoding instance index; rc_mode: Rate control mode; intra_period: I-frame interval; intra_qp: QP value for I-frames; bit_rate: Bitrate; frame_rate: Frame rate; initial_rc_qp: Initial QP value; vbv_buffer_size: VBV buffer size; ctu_level_rc_enalbe: Whether rate control operates at CTU level; min_qp_I: Minimum QP for I-frames; max_qp_I: Maximum QP for I-frames; min_qp_P: Minimum QP for P-frames; max_qp_P: Maximum QP for P-frames; min_qp_B: Minimum QP for B-frames; max_qp_B: Maximum QP for B-frames; hvs_qp_enable: Whether rate control operates at sub-CTU level; hvs_qp_scale: QP scaling factor; qp_map_enable: Enable QP map for ROI encoding; max_delta_qp: Maximum allowable deviation for HVS QP values |

| encode gop param | GOP Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; gop_preset_idx: Selected preset GOP structure; custom_gop_size: GOP size when using custom GOP; decoding_refresh_type: Specific type of IDR frame |

| encode intra refresh | Intra Refresh Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; intra_refresh_mode: Intra refresh mode; intra_refresh_arg: Intra refresh argument |

| encode longterm ref | Long-Term Reference Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; use_longterm: Enable long-term reference frames; longterm_pic_period: Period for long-term reference frames; longterm_pic_using_period: Period for referencing long-term reference frames |

| encode roi_params | ROI Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; roi_enable: Enable ROI encoding; roi_map_array_count: Number of elements in ROI map |

| encode mode_decision 1 | Block Mode Decision Parameters 1 | Various mode selection parameter values, including pu04_delta_rate, pu08_delta_rate, etc. |

| encode mode_decision 2 | Block Mode Decision Parameters 2 | Various mode selection parameter values, including pu32_intra_planar_delta_rate, pu32_intra_dc_delta_rate, etc. |

| encode h265_transform | Transform Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; chroma_cb_qp_offset: QP offset for Cb component; chroma_cr_qp_offset: QP offset for Cr component; user_scaling_list_enable: Enable user-defined scaling list |

| encode h265_pred_unit | Prediction Unit Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; intra_nxn_enable: Enable intra NXN PUs; constrained_intra_pred_flag: Whether intra prediction is constrained; strong_intra_smoothing_enabled_flag: Whether bidirectional linear interpolation is used in filtering; max_num_merge: Number of merge candidates |

| encode h265 timing | Timing Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; vui_num_units_in_tick: Number of time units per tick; vui_time_scale: Number of time units per second; vui_num_ticks_poc_diff_one_minus1: Number of clock ticks corresponding to a picture order count difference of 1 |

| encode h265 slice params | Slice Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; h265_independent_slice_mode: Independent slice encoding mode; h265_independent_slice_arg: Size of independent slices; h265_dependent_slice_mode: Dependent slice encoding mode; h265_dependent_slice_arg: Size of dependent slices |

| encode h265 deblk filter | Deblocking Filter Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; slice_deblocking_filter_disabled_flag: Whether in-slice deblocking filtering is disabled; slice_beta_offset_div2: β deblocking parameter offset for current slice; slice_tc_offset_div2: tC deblocking parameter offset for current slice; slice_loop_filter_across_slices_enabled_flag: Whether cross-slice boundary filtering is enabled |

| encode h265 sao param | SAO Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; sample_adaptive_offset_enabled_flag: Whether sample adaptive offset is applied to reconstructed pictures after deblocking |

| encode status | Current Encoding Status Parameters | enc_idx: Encoding instance index; enc_id: Encoding type; cur_input_buf_cnt: Number of currently used input buffers; cur_output_buf_cnt: Number of currently used output buffers; left_recv_frame: Remaining frames to receive (valid only when receive_frame_number is set); left_enc_frame: Remaining frames to encode (valid only when receive_frame_number is set); total_input_buf_cnt: Total number of input buffers used so far; total_output_buf_cnt: Total number of output buffers used so far; fps: Current frame rate |
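
When monitoring an encoder from a script or test tool, the data row under "encode status" can be parsed into a struct. The sketch below is illustrative: `struct venc_status` and `parse_venc_status` are hypothetical names, and it assumes the row keeps the nine whitespace-separated columns shown in the sample dump.

```c
#include <stdio.h>

/* Fields in the order printed under "----encode status----". */
struct venc_status {
    int  enc_idx;
    char enc_id[16];
    int  cur_input_buf_cnt;
    int  cur_output_buf_cnt;
    int  left_recv_frame;
    int  left_enc_frame;
    int  total_input_buf_cnt;
    int  total_output_buf_cnt;
    int  fps;
};

/* Hypothetical helper: parse one data row of the encode status group.
 * Returns 0 on success, -1 if the row does not have nine fields. */
static int parse_venc_status(const char *line, struct venc_status *st)
{
    int n = sscanf(line, "%d %15s %d %d %d %d %d %d %d",
                   &st->enc_idx, st->enc_id,
                   &st->cur_input_buf_cnt, &st->cur_output_buf_cnt,
                   &st->left_recv_frame, &st->left_enc_frame,
                   &st->total_input_buf_cnt, &st->total_output_buf_cnt,
                   &st->fps);
    return (n == 9) ? 0 : -1;
}
```

Parsing the sample row `0 h265 4 1 0 0 1093 1089 35` gives total_input_buf_cnt minus total_output_buf_cnt equal to 4, which matches cur_input_buf_cnt in that dump. The JPU encode status row uses the same columns, so the same parser should apply to it under the same assumption.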

Decoding Status

Decoding Debug Information

cat /sys/kernel/debug/vpu/vdec

root@ubuntu:~# cat /sys/kernel/debug/vpu/vdec

----decode param----

dec_idx dec_id feed_mode pix_fmt bitstream_buf_size bitstream_buf_count frame_buf_count

0 h265 1 0 13271040 6 6

----h265 decode param----

dec_idx dec_id reorder_enable skip_mode bandwidth_Opt cra_as_bla dec_temporal_id_mode target_dec_temporal_id_plus1

0 h265 1 0 1 0 0 0

----decode frameinfo----

dec_idx dec_id display_width display_height

0 h265 4096 2160

----decode status----

dec_idx dec_id cur_input_buf_cnt cur_output_buf_cnt total_input_buf_cnt total_output_buf_cnt fps

0 h265 5 1 458 453 53

Parameter Explanation

| Debug Info Group | Status Parameter | Description |

|---|---|---|

| decode param | Basic Decoding Parameters | dec_idx: Decoding instance index; dec_id: Decoding type; feed_mode: Data feeding mode; pix_fmt: Output pixel format; bitstream_buf_size: Input bitstream buffer size; bitstream_buf_count: Number of input bitstream buffers; frame_buf_count: Number of output frame buffers |

| h265 decode param | H.265 Decoding Basic Parameters | dec_idx: Decoding instance index; dec_id: Decoding type; reorder_enable: Enable decoder to output frames in display order; skip_mode: Enable frame decode skip mode; bandwidth_Opt: Enable bandwidth optimization mode; cra_as_bla: Treat CRA as BLA; dec_temporal_id_mode: Temporal ID selection mode; target_dec_temporal_id_plus1: Specified temporal ID value |

| decode frameinfo | Decoded Output Frame Info | dec_idx: Decoding instance index; dec_id: Decoding type; display_width: Display width; display_height: Display height |

| decode status | Current Decoding Status Parameters | dec_idx: Decoding instance index; dec_id: Decoding type; cur_input_buf_cnt: Number of currently used input buffers; cur_output_buf_cnt: Number of currently used output buffers; total_input_buf_cnt: Total number of input buffers used so far; total_output_buf_cnt: Total number of output buffers used so far; fps: Current frame rate |

JPU Debugging Method

The JPU (JPEG Processing Unit) is primarily used to perform JPEG/MJPEG encoding and decoding. Users can input YUV data for encoding or JPEG images for decoding via the CODEC interface, and obtain the encoded JPEG images or decoded YUV data after JPU processing.

Encoding Status

Encoding Debug Information

cat /sys/kernel/debug/jpu/jenc

root@ubuntu:~# cat /sys/kernel/debug/jpu/jenc

----encode param----

enc_idx enc_id width height pix_fmt fbuf_count extern_buf_flag bsbuf_count bsbuf_size mirror rotate

0 jpeg 1920 1088 1 5 0 5 3137536 0 0

----encode rc param----

enc_idx rc_mode frame_rate quality_factor

0 noratecontrol 0 0

----encode status----

enc_idx enc_id cur_input_buf_cnt cur_output_buf_cnt left_recv_frame left_enc_frame total_input_buf_cnt total_output_buf_cnt fps

0 jpeg 4 1 0 0 4344 4340 287

Parameter Explanation

| Debug Info Group | Status Parameter | Description |

|---|---|---|

| encode param | Basic Encoding Parameters | enc_idx: Encoding instance; enc_id: Encoding type; width: Image width; height: Image height; pix_fmt: Pixel format; fbuf_count: Number of input framebuffer buffers; extern_buf_flag: Whether user-allocated input buffers are used; bsbuf_count: Number of output bitstream buffers; bsbuf_size: Size of output bitstream buffer; mirror: Whether mirroring is enabled; rotate: Whether rotation is enabled |

| encode rc param | MJPEG Rate Control Parameters | enc_idx: Encoding instance; rc_mode: Rate control mode; frame_rate: Target frame rate; quality_factor: Quantization factor |

| encode status | Current Encoding Status Parameters | enc_idx: Encoding instance ID; enc_id: Encoding type; cur_input_buf_cnt: Number of currently used input buffers; cur_output_buf_cnt: Number of currently used output buffers; left_recv_frame: Remaining frames to receive (valid only if receive_frame_number is set); left_enc_frame: Remaining frames to encode (valid only if receive_frame_number is set); total_input_buf_cnt: Total number of input buffers used so far; total_output_buf_cnt: Total number of output buffers used so far; fps: Current frame rate |

Decoding Status

Decoding Debug Information

cat /sys/kernel/debug/jpu/jdec

root@ubuntu:~# cat /sys/kernel/debug/jpu/jdec

----decode param----

dec_idx dec_id feed_mode pix_fmt bitstream_buf_size bitstream_buf_count frame_buf_count mirror rotate

0 jpeg 1 1 3133440 5 5 0 0

----decode frameinfo----

dec_idx dec_id display_width display_height

0 jpeg 1920 1088

----decode status----

dec_idx dec_id cur_input_buf_cnt cur_output_buf_cnt total_input_buf_cnt total_output_buf_cnt fps

0 jpeg 0 1 3779 3779 264

Parameter Explanation

| Debug Info Group | Status Parameter | Description |

|---|---|---|

| decode param | Basic Decoding Parameters | dec_idx: Decoding instance; dec_id: Decoding type; feed_mode: Feed mode; pix_fmt: Pixel format; bitstream_buf_size: Size of input bitstream buffer; bitstream_buf_count: Number of input bitstream buffers; frame_buf_count: Number of output framebuffer buffers; mirror: Whether mirroring is enabled; rotate: Whether rotation is enabled |

| decode frameinfo | Decoded Frame Info | dec_idx: Decoding instance ID; dec_id: Decoding type; display_width: Display width; display_height: Display height |

| decode status | Current Decoding Status Parameters | dec_idx: Decoding instance ID; dec_id: Decoding type; cur_input_buf_cnt: Number of currently used input buffers; cur_output_buf_cnt: Number of currently used output buffers; total_input_buf_cnt: Total number of input buffers used so far; total_output_buf_cnt: Total number of output buffers used so far; fps: Current frame rate |

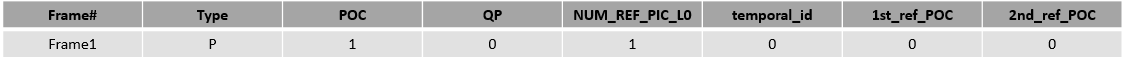

Typical Scenarios

Single-Stream Encoding

The single-stream encoding scenario is illustrated below. Scenario 0 is a simple case: YUV video/image files are read from eMMC, encoded by VPU hardware into H.26x bitstreams or by JPU hardware into JPEG images, and finally saved back to eMMC as files. Scenario 1 is a more complex pipeline where camera-captured data is encoded, compressed, and either stored or transmitted over network/PCIe.

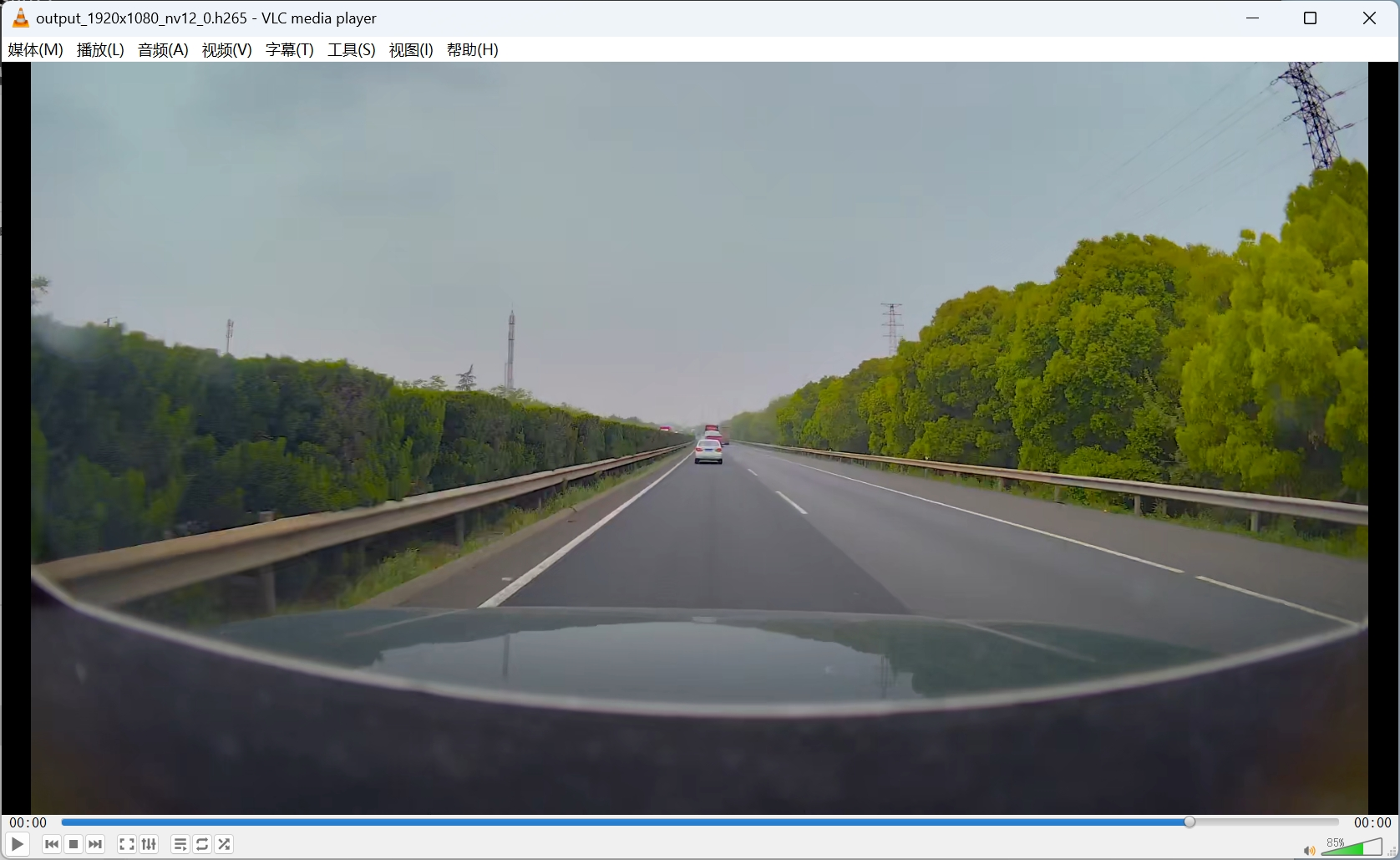

Single-Stream Decoding

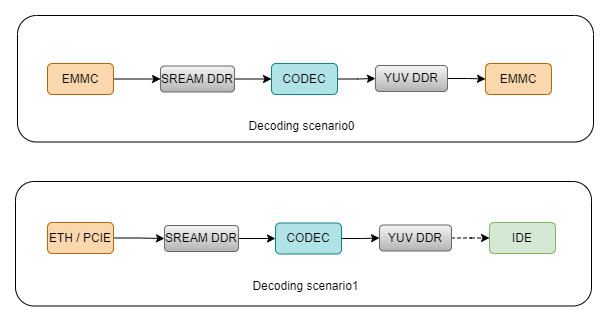

The single-stream decoding scenario is illustrated below. Scenario 0 is a simple case: H.26x bitstreams or JPEG image files are read from eMMC, decoded by VPU or JPU hardware into YUV data, and saved back to eMMC as files. Scenario 1 is a complex pipeline where encoded video or image data is received via network or PCIe, decoded by VPU or JPU hardware, and displayed via IDE.

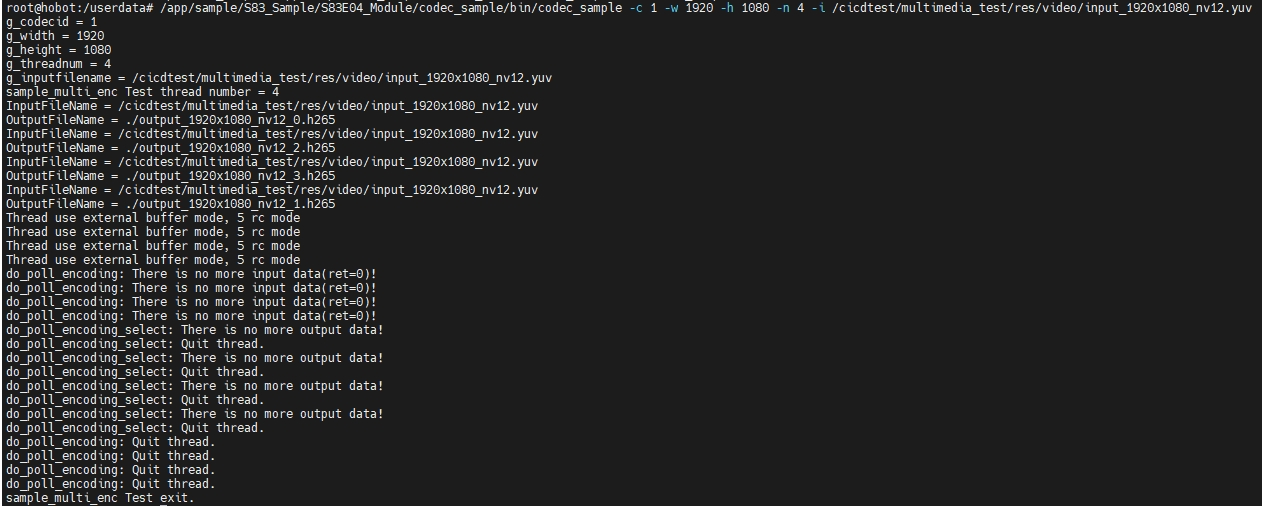

Multi-Stream Encoding

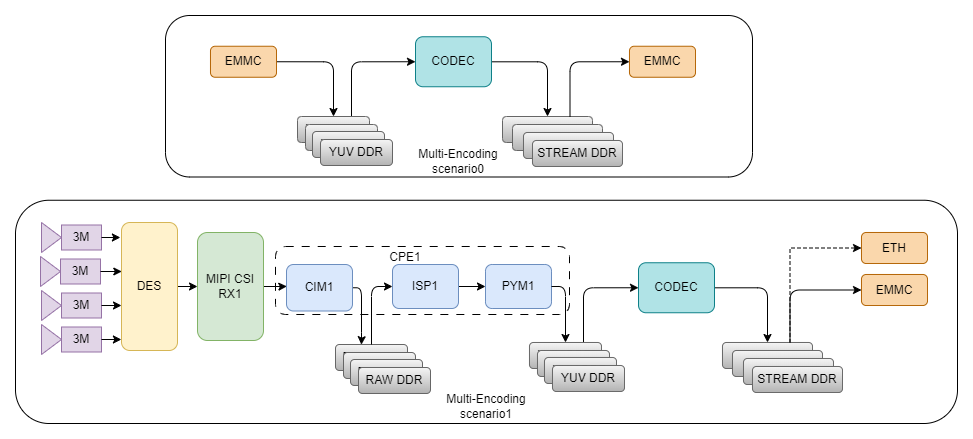

The multi-stream encoding scenario is shown below. Scenario 0 is a simple file-input case. Scenario 1 is a complex pipeline involving multiple modules. Note that in Scenario 1, the capabilities and limitations of all modules in the pipeline must be carefully considered.

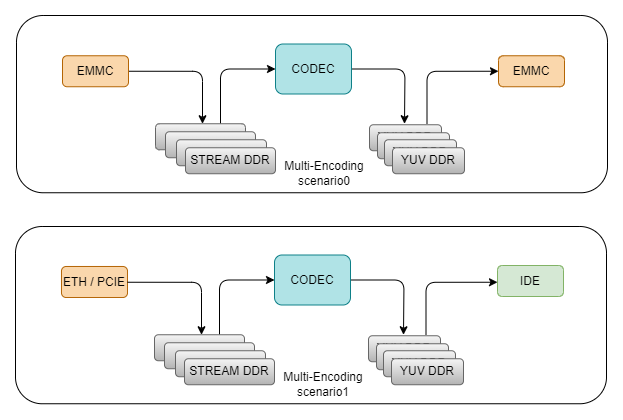

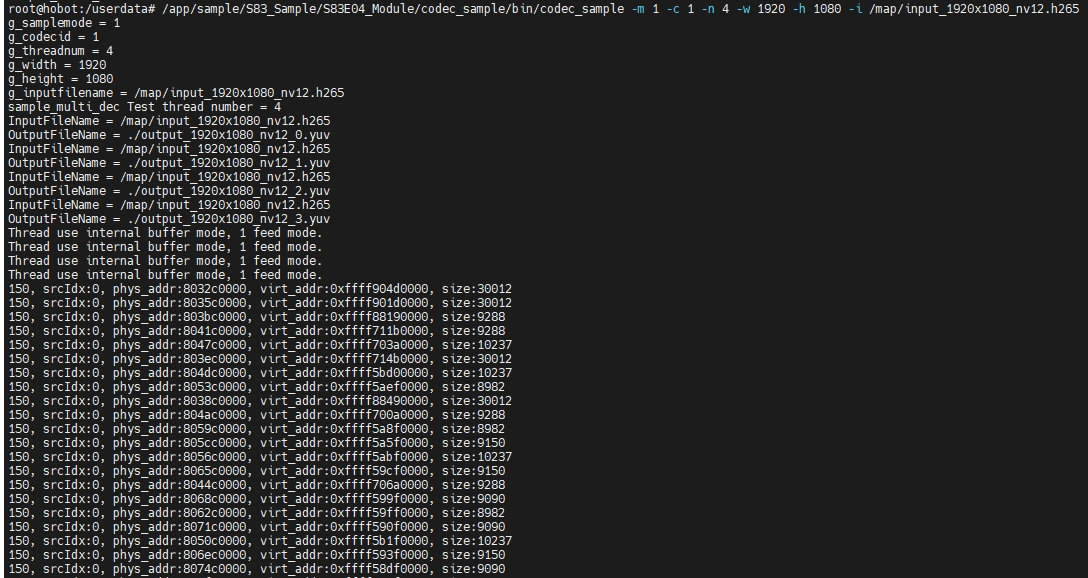

Multi-Stream Decoding

The multi-stream decoding scenario is shown below. Scenario 0 is a simple file-input case. Scenario 1 is a complex pipeline involving multiple modules. Note that in Scenario 1, the capabilities and limitations of all modules in the pipeline must be carefully considered.

Frequently Asked Questions

Configuration and Usage Issues

Video Encoding Performance Specifications

Issue Background: Regarding 4K@30FPS encoding, is it possible to encode into 4 independent 1080p@30fps streams?

Answer: No. Encoding one 4K@30fps input into four independent 1080p@30fps streams is not supported. The codec supports multi-stream encoding, but it does not support cropping. To encode four 1080p channels, the input must consist of four separate 1080p streams.

GOP Configuration Issues

Issue Background: On the S100, what is the composition of each GOP when H.265 frames are published? Are P-frames used? Can each frame be decoded independently? Are SPS and PPS inserted into each frame?

Answer: The GOP structure must be configured by the user. Both all-I-frame and I/P-frame modes are supported. Independent decoding is supported when every frame is an IDR frame, which requires an all-I-frame GOP structure with intra period = 1. Whether SPS/PPS/VPS are inserted into each IDR frame is selected through the hb_mm_mc_request_idr_header interface; by default, they are inserted.

How to Insert User Data

Issue Background: During the encoding process, how can user data be inserted via the interface?

Answer:

    // The text after the "+" is the information inserted by the user.
    uint8_t uuid[] = "dc45e9bd-e6d948b7-962cd820-d923eeef+HorizonAI";
    // sizeof counts the string's terminating NUL byte as well.
    hb_u32 length = sizeof(uuid) / sizeof(uuid[0]);
    ret = hb_mm_mc_insert_user_data(context, uuid, length);

Frame Information Logging Issue

Issue Background: Printing encoding/decoding information for every frame generates massive logs.

Answer: The encoding/decoding process sets the log level based on LOGLEVEL during initialization. When LOGLEVEL<5 is configured, frame information will not be output.

External Input Buffer Mapping Failure

Issue Background: Users encounter the error "Fail to map phys" when using external input buffer mode, and the driver reports "Failed to map ion phy due to same phys".

Answer: External input buffers refer to reusing ION Buffers requested by other modules/programs through memory mapping, reducing memory allocation and copying. Users need to pay attention to the following scenario limitations when using external input buffer mode:

- The same buffer address information cannot be given to two different encoding/decoding instances for mapping.

- External buffers should not be dynamically allocated and released to avoid overlapping addresses causing errors. It is recommended to allocate a fixed number of buffers and reuse them cyclically, releasing them uniformly after the instance exits.

Internal Buffer Allocation Failure

Issue Background: Users experience memory allocation failures when using multiple encoding/decoding channels.

Answer: After allocating memory from ion, libmm also performs map and import operations to obtain iova and vaddr addresses. However, memory allocation failures may occur due to reaching system ion memory limits, process fd limits, or internal buffer pool limits.

- ION memory limit: Reduce the number of channels or optimize system ION memory usage.

- Process fd limit: Adjust the process fd limit using ulimit -n.

- Internal buffer pool limit: This may occur when decoding specific streams. It is recommended to use streams with a limited DPB size (max_dec_frame_buffering).
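The process fd limit can also be inspected and raised from inside the program. The following is a minimal sketch using the POSIX getrlimit/setrlimit calls. The helper name raise_fd_soft_limit is hypothetical and not part of libmm. It raises the soft limit to the hard limit, which is the in-process counterpart of ulimit -n.

```c
#include <assert.h>
#include <stdio.h>
#include <sys/resource.h>

/* Hypothetical helper (not part of libmm): raise the soft limit on open
 * file descriptors to the hard limit for the current process.
 * Returns 0 on success, -1 on failure. */
int raise_fd_soft_limit(void)
{
    struct rlimit lim;

    if (getrlimit(RLIMIT_NOFILE, &lim) != 0) {
        perror("getrlimit");
        return -1;
    }
    /* The soft limit never exceeds the hard limit. */
    assert(lim.rlim_cur <= lim.rlim_max);
    printf("fd limit before: soft=%llu hard=%llu\n",
           (unsigned long long)lim.rlim_cur,
           (unsigned long long)lim.rlim_max);

    lim.rlim_cur = lim.rlim_max;
    if (setrlimit(RLIMIT_NOFILE, &lim) != 0) {
        perror("setrlimit");
        return -1;
    }
    return 0;
}
```

Calling this once at startup, before any codec instance is created, is probably the simplest placement. Raising the hard limit itself requires additional privileges and is not covered here.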

VPU Encoding/Decoding Issues

Possible Causes of Dequeue Output Buffer Timeout

Issue Background: During encoding/decoding, what could cause a dequeue output buffer timeout?

Answer:

- When CPU pressure is high, it can lead to frequent thread scheduling, causing delays in related work thread scheduling.

- After the first few dequeue outputs, failing to return buffers promptly via queue output (asymmetric interface operations) can cause this.

- Directly dequeuing output when there are no input buffers.

Timeout When Obtaining the First Frame During Video Decoding

Issue Background: When calling dequeue/queue inputbuffer/outputbuffer interfaces serially, why does the first dequeue outputbuffer always time out?

Answer: Due to hardware characteristics, when decoding H.265 the first output is an empty frame, which delays the data output by one frame.

First Frame PTS Misalignment During Video Decoding

Issue Background: The PTS of the first frame retrieved during decoding is always 0, inconsistent with the assigned value. The PTS only matches the second input frame when the second frame is retrieved.

Answer: When the first frame header information and the IDR frame are sent separately, first frame PTS alignment is supported. When sent together, due to hardware processing characteristics, the first frame PTS will be 0.

"FAILED TO DEC_PIC_HDR: ret(1) SEQERR(00005000)" Error During Decoding

Issue Background: During video decoding, the error "FAILED TO DEC_PIC_HDR: ret(1) SEQERR(00005000)" appears.

Answer: This is a frame header parsing error. When decoding, the first frame typically contains VPS, SPS, PPS information. However, due to format issues with some streams, providing VPS, SPS, PPS alone may not allow for normal decoding. In such cases, VPS+SPS+PPS+IDR needs to be provided together.

"Bitstream buffer is too small" Error During Encoding

Issue Background: During encoding, the error "Bitstream buffer is too small" appears.

Answer: This is caused by setting bitstream_buf_size too small. Increase the size value.

"Failed to VPU_EncRegisterFrameBuffer (1)" Error During Encoding

Issue Background: During encoding, the error "Failed to VPU_EncRegisterFrameBuffer (1)" appears.

Answer: This is caused by setting vlc_buf_size too small. Increase the size value.

Video Color Range Issue

Issue Background: When using the VPU for H.265 encoding on the S100, does the encoder force conversion to limited range (TV range) output?

Test Method: Playing back an H.265 stream encoded by an S100 directly with ffmpeg shows it parsed as TV range.

Resolution: The codec cannot distinguish between full range and limited range input; only the user knows the range of the input. The codec processes pixel values within [0,255] exactly as supplied, without conversion, and decoding does not convert either. ffmpeg, however, has a swscaler module that can perform range conversion: yuv420p is limited range and yuvj420p is full range, and ffmpeg converts between input and output formats internally based on the video full range information in the stream.

Therefore, users who know whether their input is full range or limited range can set the VUI information accordingly. During decoding, ffmpeg then uses the VUI information to determine the output format and whether a conversion is needed.

Currently, based on user requirements, the default color range mode in the VUI information has been changed to full range.

Abnormal Stripes When Encoding Large Areas of Blue Sky

Issue Background: When encoding large areas of blue sky, some abnormal stripe patterns appear. What is the cause?

Answer: The original image contains significant noise. After compression, the noise distribution becomes irregular, appearing as stripes. This is actually caused by compressing the noise, a characteristic of the codec hardware that cannot be eliminated.

CBR or AVBR Mode Output Bitrate Not Meeting Expectations

Issue Background: When using CBR or AVBR modes, users find that the actual output stream bitrate deviates from the set target bitrate by more than 10%.

Answer:

- For all I-frame encoding (gop_preset_idx=1), the I-frame interval (intra_period) must be set to 1.

- Check if the target bitrate setting is reasonable. Adjusting quality parameters has upper and lower limits. (For testing reference only: For h264, set bitrate with a 1:100 compression ratio; for h265, set bitrate with a 1:150 compression ratio.)

- Ensure enough frames are encoded, as the rate control process requires time to adjust quality parameters.
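The compression-ratio guideline above can be turned into a quick estimate. The sketch below assumes 8-bit YUV 4:2:0 input (12 bits per pixel) and returns the result in kbps. The function names are hypothetical, and the unit expected by the bit_rate field should be confirmed against the SDK headers before using the value.

```c
#include <assert.h>
#include <stdint.h>

/* Raw bitrate in bits per second of an 8-bit YUV 4:2:0 stream
 * (12 bits per pixel: 8 for luma, 4 for the subsampled chroma). */
uint64_t raw_yuv420_bps(uint32_t width, uint32_t height, uint32_t fps)
{
    return (uint64_t)width * height * 12u * fps;
}

/* Estimated target bitrate in kbps for a compression ratio of 1:ratio,
 * e.g. ratio = 100 for H.264 or ratio = 150 for H.265 (test reference only). */
uint32_t target_kbps(uint32_t width, uint32_t height, uint32_t fps, uint32_t ratio)
{
    assert(ratio > 0);
    return (uint32_t)(raw_yuv420_bps(width, height, fps) / ratio / 1000u);
}
```

For 1920x1080 at 30 fps, this gives 7464 kbps at 1:100 and 4976 kbps at 1:150. A target far below these values may be out of reach of rate control.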

High Priority Encoding Limitations

In multi-stream encoding scenarios, a specific stream can be assigned high priority for preferential processing to achieve minimal latency. This is done by setting the priority parameter of the low-latency encoding task to 31 (high priority). Example:

media_codec_context_t context;

context.priority = PRIO_31;

However, the following limitations should be noted:

- The low-latency target cannot be guaranteed when multiple high-priority tasks exist.

- Users must ensure a proper software scheduling environment for high-priority task programs to maintain stable latency. (Suggestion: Keep total CPU load below 90%. Change the scheduling policy of the thread creating the high-priority encoding task to FIFO, and set the priority to 20.)

- When DDR load is high in the business scenario and VPU has low priority on the bus, bandwidth competition can affect hardware processing latency. (Suggestion: Ensure write bandwidth traffic with higher priority than VPU does not exceed 45% of DDR load. Setting rt_task_expect_latency_ms in the sysfs node to 0 can reduce instruction queuing.)
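The scheduling suggestion above (FIFO policy, priority 20) can be applied with the standard Linux scheduler interface. This is a minimal sketch rather than SDK code. The function name is hypothetical, and it must be called from the thread that creates the high-priority encoding task. The call usually requires root or CAP_SYS_NICE.

```c
#include <assert.h>
#include <sched.h>

/* Switch the calling thread to SCHED_FIFO with the given priority.
 * Returns 0 on success, or -1 with errno set (typically EPERM when the
 * process lacks the required privileges). */
int set_calling_thread_fifo(int priority)
{
    struct sched_param sp = { .sched_priority = priority };

    assert(priority >= sched_get_priority_min(SCHED_FIFO) &&
           priority <= sched_get_priority_max(SCHED_FIFO));

    /* On Linux, pid 0 selects the calling thread. */
    return sched_setscheduler(0, SCHED_FIFO, &sp);
}
```

A typical use would be to call set_calling_thread_fifo(20) before hb_mm_mc_initialize in the thread that owns the context whose priority is set to PRIO_31, and to log a warning if it fails.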

JPU Encoding/Decoding Issues

Green Edge Appears When Viewing 1080p Images with JPG Tools

Issue Background: When using jpg tools to view a 1920x1080 image, a green edge appears at the bottom.

Explanation: The IP encodes in units of 16 pixels. A height of 1080 is a multiple of 8 but not of 16, so the encoder pads the remaining rows of the last block row. The padding contains undefined data rather than valid image data, which some viewers display as a green edge.
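The amount of padding can be computed directly. The sketch below assumes the alignment unit is 16 pixels, matching the 16×16 processing unit mentioned in the JPEG codec limitations. The helper names are illustrative.

```c
#include <assert.h>
#include <stdint.h>

/* Round value up to the next multiple of align (align must be a power of two). */
uint32_t align_up(uint32_t value, uint32_t align)
{
    assert(align != 0 && (align & (align - 1)) == 0);
    return (value + align - 1) & ~(align - 1);
}

/* Number of padding rows added below the valid image for 16-row alignment. */
uint32_t padded_rows(uint32_t height)
{
    return align_up(height, 16) - height;
}
```

For a 1920x1080 image, align_up(1080, 16) is 1088, so 8 rows of padding follow the valid picture. A height of 720 is already a multiple of 16 and needs none.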

Different MD5 After Multiple Encodings of a 1080p Image

Issue Background: When the same 1080p yuv image is encoded multiple times, even with identical encoding parameters, the resulting jpg images may have different md5 results.

Cause: The IP encodes in units of 16 pixels. A height of 1080 is a multiple of 8 but not of 16, so the encoder pads the remaining rows. The padding contains undefined data rather than valid image data, so the padded bytes, and therefore the MD5 of the output, can differ between runs.

External Input Buffer Mapping Count Limit

Issue Background: When using external input buffer mode (zero-copy), passing more than 32 buffer addresses results in the error "Fail to get map idx".

Answer: Libmm internally caches jpg input buffer mapping information. The default upper limit is 32, with unmapping performed uniformly upon exit. It is recommended to allocate a limited number of buffers and reuse them cyclically.

Codec API

MediaCodec Interface Description

The MediaCodec module is primarily used for audio, video, and JPEG image encoding/decoding. This module provides a set of interfaces to facilitate user input of data to be processed and retrieval of processed data. It supports multiple concurrent encoding or decoding instances. Video and JPEG image encoding/decoding are hardware-accelerated, requiring users to link against libmultimedia.so. Audio encoding/decoding is software-based using FFmpeg interfaces, requiring users to link against libffmedia.so. The table below lists the video and audio codecs supported on RDK S100. Note that AAC encoding/decoding requires a license; users must obtain proper authorization before enabling related features.

H.265 supports up to Main profile and Level 5.1, with Main-tier support. H.264 supports up to High profile and Level 5.2. MJPEG/JPEG supports ISO/IEC 10918-1 Baseline sequential. Supported audio codecs include: G.711 A-law/Mu-law, G.729 ADPCM, ADPCM IMA WAV, FLAC, AAC LC, AAC Main, AAC SSR, AAC LTP, AAC LD, AAC HE, and AAC HEv2 (AAC requires a license).

Additionally, video and image sources include two types: images from VIO input and user-provided YUV data (which may be loaded from files or received over a network). Audio sources include two types: audio from MIC input (digitized by the Audio Codec) and user-provided PCM data (which may be loaded from files or received over a network).

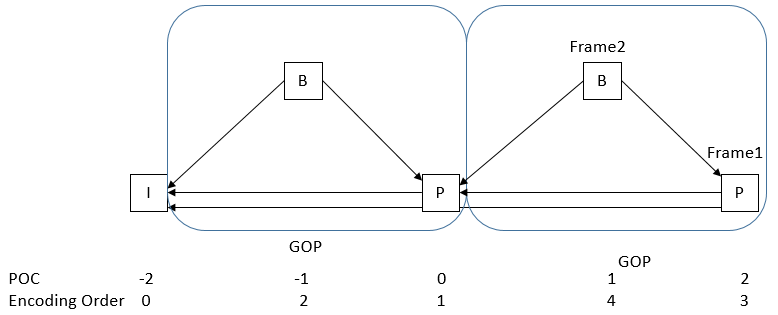

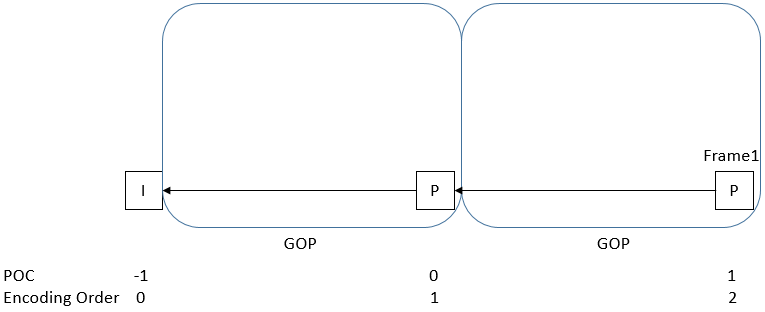

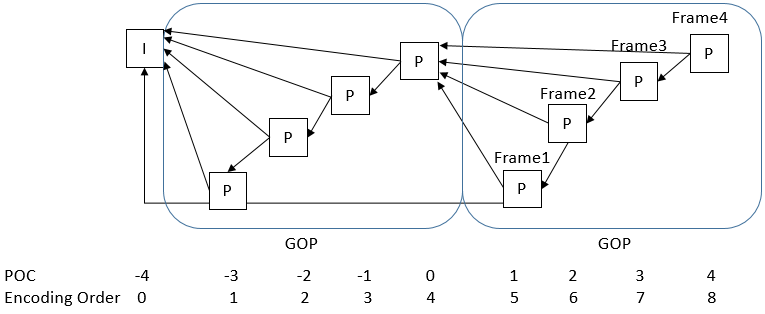

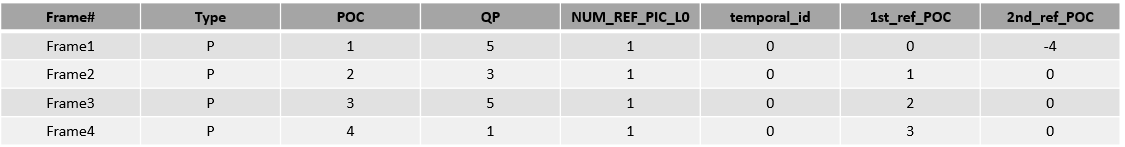

GOP

H.264/H.265 encoding supports GOP structure configuration. Users can select from nine predefined GOP structures or define custom GOP structures.

GOP Structure Table

A GOP structure table defines a periodic GOP pattern used throughout the encoding process. Elements in a single structure table are described below. Users can specify reference frames for each picture. If a frame after an IDR references a frame before the IDR, the encoder automatically handles this to avoid invalid references—users need not worry about such cases. When defining a custom GOP, users must specify the number of structure tables (up to 3), and tables must be ordered in decoding sequence.

| Element | Description |

|---|---|

| Type | Slice type (I, P, B) |

| POC | Display order of the frame within a GOP, ranging from 1 to GOP size |

| QPoffset | Quantization parameter offset for the picture in the custom GOP |

| NUM_REF_PIC_L0 | Flag to enable multi-reference pictures for P frames. Valid only if PIC_TYPE is P |

| temporal_id | Temporal layer of the frame. A frame cannot reference another frame with a higher temporal_id (range: 0–6). |

| 1st_ref_POC | POC of the first reference picture in L0 |

| 2nd_ref_POC | POC of the first reference picture in L1 if Type is B; POC of the second reference picture in L0 if Type is P. Note that reference_L1 can share the same POC as reference_L0 in B slices, but for better compression efficiency, it is recommended that reference_L1 and reference_L0 have different POCs. |

Predefined GOP Structures

| Index | GOP Structure | Low Delay (encoding order = display order) | GOP Size | Encoding Order | Minimum Source Frame Buffer | Minimum Decoded Picture Buffer | Intra Period (I Frame Interval) Requirement |

|---|---|---|---|---|---|---|---|

| 1 | I | Yes | 1 | I0-I1-I2… | 1 | 1 | |

| 2 | P | Yes | 1 | P0-P1-P2… | 1 | 2 | |

| 3 | B | Yes | 1 | B0-B1-B2… | 1 | 3 | |

| 4 | BP | No | 2 | B1-P0-B3-P2… | 1 | 3 | Multiple of 2 |

| 5 | BBBP | No | 4 | B2-B1-B3-P0… | 7 | 4 | Multiple of 4 |

| 6 | PPPP | Yes | 4 | P0-P1-P2-P3… | 1 | 2 | |

| 7 | BBBB | Yes | 4 | B0-B1-B2-B3… | 1 | 3 | |

| 8 | BBBB BBBB | No | 8 | B3-B2-B4-B1-B6-B5-B7-B0… | 12 | 5 | Multiple of 8 |

| 9 | P | Yes | 1 | P0 | 1 | 2 | |
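The intra period requirement in the last column can be checked before configuring the encoder. The following is a small sketch based only on the table above; the function and array names are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Required intra-period multiple per predefined GOP preset (index 1..9),
 * taken from the table above; 1 means no restriction. Index 0 is unused. */
static const uint32_t kIntraPeriodMultiple[10] = { 0, 1, 1, 1, 2, 4, 1, 1, 8, 1 };

/* True if intra_period satisfies the requirement of the given preset. */
bool intra_period_ok(uint32_t gop_preset_idx, uint32_t intra_period)
{
    assert(gop_preset_idx >= 1 && gop_preset_idx <= 9);
    return intra_period % kIntraPeriodMultiple[gop_preset_idx] == 0;
}
```

For example, an intra period of 30 is acceptable for presets 2 and 4 but not for preset 5 (not a multiple of 4) or preset 8 (not a multiple of 8).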

The following describes the nine predefined GOP structures.

GOP Preset 1

- Contains only I-frames with no inter-frame references;

- Low latency;

GOP Preset 2

- Contains only I-frames and P-frames;

- P-frames reference two previous frames;

- Low latency;

GOP Preset 3

- Contains only I-frames and B-frames;

- B-frames reference two previous frames;

- Low latency;

GOP Preset 4

- Contains I-, P-, and B-frames;

- P-frames reference two previous frames;

- B-frames reference one previous and one future frame;

GOP Preset 5

- Contains I-, P-, and B-frames;

- P-frames reference two previous frames;

- B-frames reference one previous and one future frame (which can be either P or B);

GOP Preset 6

- Contains only I-frames and P-frames;

- P-frames reference two previous frames;

- Low latency;

GOP Preset 7

- Contains only I-frames and B-frames;

- B-frames reference two previous frames;

- Low latency;

GOP Preset 8

- Contains only I-frames and B-frames;

- B-frames reference one previous and one future frame;

GOP Preset 9

- Contains only I-frames and P-frames;

- Each P-frame references one forward reference frame;

- Low latency;

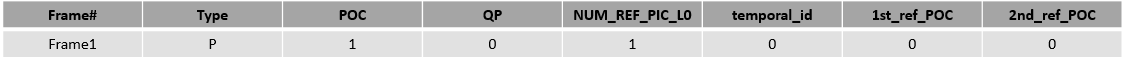

Long-term Reference Frames

Users can specify the interval for long-term reference frames and the interval at which frames reference long-term reference frames, as shown in the figure below:

Intra Refresh

Intra Refresh mode enhances error resilience by periodically inserting intra-coded MBs/CTUs within non-I frames. It provides the decoder with more recovery points to prevent image corruption caused by temporal errors. Users can specify the number of consecutive rows, columns, or step size of MBs/CTUs to force the encoder to insert intra-coded units. Additionally, users may specify the size of intra-coded units, allowing the encoder to internally determine which blocks require intra coding.

Rate Control

MediaCodec supports bitrate control for H.264, H.265, and MJPEG codecs. For H.264 and H.265 encoding channels, it supports five rate control modes: CBR, VBR, AVBR, FixQp, and QpMap. For MJPEG encoding channels, it supports FixQp mode.

- CBR ensures a stable overall encoded bitrate;

- VBR maintains consistent visual quality;

- AVBR balances both bitrate and quality, producing a relatively stable stream in terms of both bitrate and image quality;

- FixQp fixes the QP value for every I-frame and P-frame;

- QpMap assigns a specific QP value to each block within a frame (block size is 32×32 for H.265 and 16×16 for H.264).

For CBR and AVBR, the encoder internally determines an appropriate QP value for each frame to maintain constant bitrate. The encoder supports three levels of rate control: frame-level, CTU/MB-level, and subCTU/subMB-level.

- Frame-level control calculates a single QP per frame based on the target bitrate to ensure bitrate stability.

- CTU/MB-level control assigns a QP to each 64×64 CTU or 16×16 MB according to its target bitrate, achieving finer bitrate control, though frequent QP adjustments may cause visual quality instability.

- subCTU/subMB-level control assigns a QP to each 32×32 subCTU or 8×8 subMB. Complex blocks receive higher QP values, while static blocks receive lower QP values—since the human eye is more sensitive to static regions than complex ones. Detection of complex vs. static regions relies on internal hardware modules. This level aims to improve subjective visual quality while maintaining bitrate stability, resulting in higher SSIM scores but potentially lower PSNR scores.

ROI

ROI encoding works similarly to QpMap: users must assign a QP value to each block in raster-scan order, as illustrated below:

For H.264, each block is 16×16; for H.265, it is 32×32. In the ROI map, each QP value occupies one byte, ranging from 0 to 51. ROI encoding can operate alongside CBR or AVBR.

- When CBR/AVBR is disabled, the actual QP for each block equals the value specified in the ROI map.

- When CBR/AVBR is enabled, the actual QP for block i is calculated as:

QP(i) = MQP(i) + RQP(i) - ROIAvgQP

where:

- MQP is the value from the ROI map,

- RQP is the QP determined by the encoder’s internal rate control,

- ROIAvgQP is the average QP value across the entire ROI map.
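The map layout and the formula can be sketched in plain C. The helper names are hypothetical. Counting partial edge blocks as full blocks, using integer division for the average, and clamping the result to 0–51 are assumptions for illustration; the hardware's exact rounding is not specified here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Number of ROI map entries: one byte per block in raster-scan order.
 * block is 16 for H.264 and 32 for H.265. Partial blocks at the right and
 * bottom edges are counted as full blocks (assumption). */
size_t roi_map_size(uint32_t width, uint32_t height, uint32_t block)
{
    assert(block == 16 || block == 32);
    return (size_t)((width + block - 1) / block) * ((height + block - 1) / block);
}

/* ROIAvgQP: average of all QP values in the map (integer division, assumption). */
int roi_avg_qp(const uint8_t *map, size_t count)
{
    uint64_t sum = 0;

    assert(map != NULL && count > 0);
    for (size_t i = 0; i < count; i++)
        sum += map[i];
    return (int)(sum / count);
}

/* QP(i) = MQP(i) + RQP(i) - ROIAvgQP, clamped to 0..51 (clamp is an assumption). */
int roi_block_qp(int mqp, int rqp, int avg)
{
    int qp = mqp + rqp - avg;

    if (qp < 0)
        qp = 0;
    if (qp > 51)
        qp = 51;
    return qp;
}
```

With a map of {20, 30, 40, 30}, the average is 30. If rate control picks RQP = 35, the block with MQP = 20 is encoded at QP 25 and the block with MQP = 40 at QP 45, so the map shifts quality between regions while the average stays near the rate-control value.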

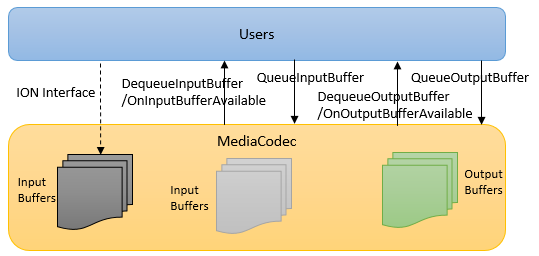

Input/Output Buffer Management

MediaCodec uses two types of buffers: input and output. Typically, these buffers are allocated uniformly by MediaCodec via the ION interface, so users do not need to manage allocation directly. Instead, users should call dequeue to obtain an available buffer before use and queue to return it after processing.

However, to reduce unnecessary buffer copying in certain scenarios—e.g., when using PYM’s output buffer directly as MediaCodec input (since PYM allocates this buffer internally via ION)—MediaCodec also supports user-allocated input buffers. In such cases, users must allocate physically contiguous buffers via the ION interface and set the external_frame_buf field in media_codec_context_t before configuring MediaCodec.

Note: Even when providing external input buffers, users must still perform dequeue to retrieve buffer metadata (e.g., virtual and physical addresses), populate this information, and then call queue.

Frame Rate Control

MediaCodec does not currently support internal frame rate control.

- If users do not enable direct VIO–MediaCodec interaction via hb_mm_mc_set_camera, they must manually control the input buffer frame rate.

- If VIO–MediaCodec interaction is enabled, users only manage output buffers; input buffering is handled automatically. In this mode, MediaCodec performs no frame rate control on input buffers. If encoding stalls or the input buffer queue becomes full, MediaCodec will wait until space becomes available before proceeding.

Frame Skip Configuration

Users can call hb_mm_mc_skip_pic to set the next queued input frame to "skip" mode. This mode applies only to non-I frames. In skip mode, the encoder ignores the input frame and instead generates the reconstructed frame by reusing the previous frame’s reconstruction, encoding the current input as a P-frame.

JPEG Codec Limitations

- For JPEG/MJPEG encoding:

  - With YUV420/YUV422 input: width must be 16-byte aligned, height 8-byte aligned.

  - With YUV440/YUV444/YUV400 input: both width and height must be 8-byte aligned.

  - If cropping is applied, the crop origin (x, y) must also be 8-byte aligned.

- For JPEG/MJPEG encoding with 90°/270° rotation:

  - YUV420: width 16-aligned, height 8-aligned.

  - YUV422/YUV440: width 16-aligned, height 16-aligned.

  - YUV444/YUV400: width and height 8-aligned.

  - With cropping:

    - YUV420: crop width 16-aligned, crop height 8-aligned.

    - YUV422/YUV440: crop width 16-aligned, crop height 16-aligned.

    - YUV444/YUV400: crop width and height 8-aligned.

- For JPEG/MJPEG encoding with 90°/270° rotation:

  - YUV422 input becomes YUV440 after rotation.

  - YUV440 input becomes YUV422 after rotation.

- For JPEG/MJPEG decoding with rotation or mirroring:

  - Output YUV format must match input format, except:

    - When decoding YUV422 JPEG/MJPEG with 90°/270° rotation, output format must be YUV440p, YUYV, YVYU, UYVY, or VYUY.

- JPEG/MJPEG decoding: Rotation/mirroring cannot be used simultaneously with cropping.

- JPEG/MJPEG decoding: Output buffer dimensions must align with the input format’s MCU width and height. If cropping is enabled, all crop parameters (origin x/y and width/height) must also align with the MCU dimensions. (MCU sizes: 420 → 16×16, 422 → 16×8, 440 → 8×16, 400 → 8×8, 444 → 8×8.)

- JPEG/MJPEG decoding: Output format Packed YUV444 requires input in YUV444 format.

- JPEG/MJPEG decoding: Only supports MC_FEEDING_MODE_FRAME_SIZE mode.

- JPEG encoding: If the user specifies the bitstream buffer size, an additional 4KB must be allocated.

- JPEG encoding: Since the encoder processes data in 16×16 units, non-aligned input may result in padding differences in the final encoded data. This does not affect valid pixel data but is a hardware limitation; caution is advised when performing MD5 comparisons.
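The MCU alignment rule for decoding can be validated before a crop is configured. This sketch encodes only the MCU sizes listed above. The enum, struct, and function names are hypothetical and do not correspond to SDK types.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Local stand-in for the JPEG chroma formats discussed above. */
typedef enum { FMT_YUV420, FMT_YUV422, FMT_YUV440, FMT_YUV400, FMT_YUV444 } jpeg_fmt_t;

typedef struct { uint32_t w, h; } mcu_size_t;

/* MCU sizes: 420 -> 16x16, 422 -> 16x8, 440 -> 8x16, 400 and 444 -> 8x8. */
mcu_size_t mcu_size(jpeg_fmt_t fmt)
{
    switch (fmt) {
    case FMT_YUV420: return (mcu_size_t){ 16, 16 };
    case FMT_YUV422: return (mcu_size_t){ 16, 8 };
    case FMT_YUV440: return (mcu_size_t){ 8, 16 };
    case FMT_YUV400:
    case FMT_YUV444: return (mcu_size_t){ 8, 8 };
    }
    assert(!"unknown format");
    return (mcu_size_t){ 8, 8 };
}

/* True if a decode crop rectangle (origin and size) is aligned to the MCU. */
bool crop_is_mcu_aligned(jpeg_fmt_t fmt, uint32_t x, uint32_t y, uint32_t w, uint32_t h)
{
    mcu_size_t m = mcu_size(fmt);

    return x % m.w == 0 && w % m.w == 0 && y % m.h == 0 && h % m.h == 0;
}
```

A 1920x1080 crop of a YUV420 image fails this check because 1080 is not a multiple of 16, whereas 1920x1088 passes.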

API Reference

hb_mm_mc_get_descriptor

【Function Declaration】

const media_codec_descriptor_t* hb_mm_mc_get_descriptor(media_codec_id_t codec_id);

【Parameters】

- [IN] media_codec_id_t codec_id: Specifies the codec type.

【Return Value】

- Non-null: Codec descriptor containing information.

- NULL: No descriptor found for the given codec ID.

【Description】

Retrieves codec information supported by MediaCodec based on codec_id, including codec name, detailed description, MIME type, and supported profiles.

【Example】

#include "hb_media_codec.h"

#include "hb_media_error.h"

int main(int argc, char *argv[])

{

const media_codec_descriptor_t *desc = NULL;

desc = hb_mm_mc_get_descriptor(MEDIA_CODEC_ID_H265);

return 0;

}

hb_mm_mc_get_default_context

【Function Declaration】

hb_s32 hb_mm_mc_get_default_context(media_codec_id_t codec_id, hb_bool encoder, media_codec_context_t *context);

【Parameters】

- [IN] media_codec_id_t codec_id: Specifies the codec type.

- [IN] hb_bool encoder: Indicates whether the codec is an encoder (true) or decoder (false).

- [OUT] media_codec_context_t *context: Receives the default context for the specified codec.

【Return Value】

- 0: Success.

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters.

【Description】

Obtains the default configuration attributes for a specified codec.

【Example】

#include <string.h>

#include "hb_media_codec.h"

#include "hb_media_error.h"

int main(int argc, char *argv[])

{

int ret = 0;

media_codec_context_t context;

memset(&context, 0x00, sizeof(context));

ret = hb_mm_mc_get_default_context(MEDIA_CODEC_ID_H265, 1, &context);

return 0;

}

hb_mm_mc_initialize

【Function Declaration】

hb_s32 hb_mm_mc_initialize(media_codec_context_t *context)

【Parameters】

- [IN] media_codec_context_t *context: Context configuration for the codec.

【Return Values】

- 0: Success.

- HB_MEDIA_ERR_UNKNOWN: Unknown error.

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters.

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not permitted.

- HB_MEDIA_ERR_INSUFFICIENT_RES: Insufficient internal memory resources.

- HB_MEDIA_ERR_NO_FREE_INSTANCE: No free instance available (max: 32 for Video, 64 for MJPEG/JPEG, 32 for Audio).

【Description】

Initializes an encoder or decoder. Upon success, MediaCodec enters the MEDIA_CODEC_STATE_INITIALIZED state.

【Example】

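#include <stdio.h>

#include <stdint.h>

#include <string.h>

#include <time.h>

#include <unistd.h>

#include "hb_media_codec.h"

#include "hb_media_error.h"

/* TAG and MAX_FILE_PATH are not defined in this excerpt; the values below are examples. */

#define TAG "[MEDIACODEC]"

#define MAX_FILE_PATH 256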

static Uint64 osal_gettime(void)

{

struct timespec tp;

clock_gettime(CLOCK_MONOTONIC, &tp);

return ((Uint64)tp.tv_sec*1000 + tp.tv_nsec/1000000);

}

typedef struct MediaCodecTestContext {

media_codec_context_t *context;

char *inputFileName;

char *outputFileName;

int32_t vlc_buf_size; /* read by on_vlc_buffer_message; 0 leaves the VLC buffer listener unset */

} MediaCodecTestContext;

typedef struct AsyncMediaCtx {

media_codec_context_t *ctx;

FILE *inFile;

FILE *outFile;

int lastStream;

Uint64 startTime;

int32_t duration;

} AsyncMediaCtx;

static void on_encoder_input_buffer_available(hb_ptr userdata,

media_codec_buffer_t *inputBuffer) {

AsyncMediaCtx *asyncCtx = (AsyncMediaCtx *)userdata;

int noMoreInput = 0;

hb_s32 ret = 0;

Uint64 curTime = 0;

if (!noMoreInput) {

curTime = osal_gettime();

if ((curTime - asyncCtx->startTime)/1000 < (uint32_t)asyncCtx->duration) {

ret = fread(inputBuffer->vframe_buf.vir_ptr[0], 1,

inputBuffer->vframe_buf.size, asyncCtx->inFile);

if (ret <= 0) {

if(fseek(asyncCtx->inFile, 0, SEEK_SET)) {

printf("Failed to rewind input file\n");

} else {

ret = fread(inputBuffer->vframe_buf.vir_ptr[0], 1,

inputBuffer->vframe_buf.size, asyncCtx->inFile);

if (ret <= 0) {

printf("Failed to read input file\n");

}

}

}

}

if (!ret) {

/* Nothing was read (end of input or duration elapsed): send an empty end-of-stream frame. */

printf("%s There is no more input data!\n", TAG);

inputBuffer->vframe_buf.frame_end = TRUE;

inputBuffer->vframe_buf.size = 0;

noMoreInput = 1;

}

}

}

static void on_encoder_output_buffer_available(hb_ptr userdata,

media_codec_buffer_t *outputBuffer,

media_codec_output_buffer_info_t *extraInfo) {

AsyncMediaCtx *asyncCtx = (AsyncMediaCtx *)userdata;

mc_265_output_stream_info_t info = extraInfo->video_stream_info;

fwrite(outputBuffer->vstream_buf.vir_ptr,

outputBuffer->vstream_buf.size, 1, asyncCtx->outFile);

if (outputBuffer->vstream_buf.stream_end) {

printf("There is no more output data!\n");

asyncCtx->lastStream = 1;

}

}

static void on_encoder_media_codec_message(hb_ptr userdata, hb_s32

error) {

AsyncMediaCtx *asyncCtx = (AsyncMediaCtx *)userdata;

if (error) {

asyncCtx->lastStream = 1;

printf("ERROR happened!\n");

}

}

static void on_vlc_buffer_message(hb_ptr userdata, hb_s32 * vlc_buf)

{

MediaCodecTestContext *ctx = (MediaCodecTestContext *)userdata;

printf("%s %s VLC Buffer size = %d; Reset to %d.\n", TAG,

__FUNCTION__,

*vlc_buf, ctx->vlc_buf_size);

*vlc_buf = ctx->vlc_buf_size;

}

static void do_async_encoding(void *arg) {

hb_s32 ret = 0;

FILE *outFile = NULL; /* NULL so the cleanup path can test it safely */

FILE *inFile = NULL;

int step = 0;

AsyncMediaCtx asyncCtx;

MediaCodecTestContext *ctx = (MediaCodecTestContext *)arg;

media_codec_context_t *context = ctx->context;

char *inputFileName = ctx->inputFileName;

char *outputFileName = ctx->outputFileName;

media_codec_state_t state = MEDIA_CODEC_STATE_NONE;

inFile = fopen(inputFileName, "rb");

if (!inFile) {

goto ERR;

}

outFile = fopen(outputFileName, "wb");

if (!outFile) {

goto ERR;

}

memset(&asyncCtx, 0x00, sizeof(AsyncMediaCtx));

asyncCtx.ctx = context;

asyncCtx.inFile = inFile;

asyncCtx.outFile = outFile;

asyncCtx.lastStream = 0;

asyncCtx.duration = 5;

asyncCtx.startTime = osal_gettime();

ret = hb_mm_mc_initialize(context);

if (ret) {

goto ERR;

}

media_codec_callback_t callback;

callback.on_input_buffer_available =

on_encoder_input_buffer_available;

callback.on_output_buffer_available =

on_encoder_output_buffer_available;

callback.on_media_codec_message = on_encoder_media_codec_message;

ret = hb_mm_mc_set_callback(context, &callback, &asyncCtx);

if (ret) {

goto ERR;

}

media_codec_callback_t callback2;

callback2.on_vlc_buffer_message = on_vlc_buffer_message;

if (ctx->vlc_buf_size > 0) {

ret = hb_mm_mc_set_vlc_buffer_listener(context, &callback2, ctx);

if (ret) {

goto ERR;

}

}

ret = hb_mm_mc_configure(context);

if (ret) {

goto ERR;

}

mc_av_codec_startup_params_t startup_params;

startup_params.video_enc_startup_params.receive_frame_number = 0;

ret = hb_mm_mc_start(context, &startup_params);

if (ret) {

goto ERR;

}

while(!asyncCtx.lastStream) {

sleep(1);

}

hb_mm_mc_stop(context);

hb_mm_mc_release(context);

context = NULL;

ERR:

hb_mm_mc_get_state(context, &state);

if (context && state !=

MEDIA_CODEC_STATE_UNINITIALIZED) {

hb_mm_mc_stop(context);

hb_mm_mc_release(context);

}

if (inFile)

fclose(inFile);

if (outFile)

fclose(outFile);

}

int main(int argc, char *argv[])

{

int ret = 0;

char inputFileName[MAX_FILE_PATH] = "./tmp.yuv";

char outputFileName[MAX_FILE_PATH] = "./output.h265";

mc_video_codec_enc_params_t *params = NULL;

media_codec_context_t context;

memset(&context, 0x00, sizeof(media_codec_context_t));

context.codec_id = MEDIA_CODEC_ID_H265;

context.encoder = 1;

params = &context.video_enc_params;

params->width = 640;

params->height = 480;

params->pix_fmt = MC_PIXEL_FORMAT_YUV420P;

params->frame_buf_count = 5;

params->external_frame_buf = 0;

params->bitstream_buf_count = 5;

params->rc_params.mode = MC_AV_RC_MODE_H265CBR;

ret = hb_mm_mc_get_rate_control_config(&context, &params->rc_params);

if (ret) {

return -1;

}

params->rc_params.h265_cbr_params.bit_rate = 5000;

params->rc_params.h265_cbr_params.frame_rate = 30;

params->rc_params.h265_cbr_params.intra_period = 30;

params->gop_params.decoding_refresh_type = 2;

params->gop_params.gop_preset_idx = 2;

params->rot_degree = MC_CCW_0;

params->mir_direction = MC_DIRECTION_NONE;

params->frame_cropping_flag = FALSE;

MediaCodecTestContext ctx;

memset(&ctx, 0x00, sizeof(ctx));

ctx.context = &context;

ctx.inputFileName = inputFileName;

ctx.outputFileName = outputFileName;

do_async_encoding(&ctx);

return 0;

}

hb_mm_mc_set_callback

【Function Declaration】

hb_s32 hb_mm_mc_set_callback(media_codec_context_t *context, const media_codec_callback_t *callback, hb_ptr userdata)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [IN] const media_codec_callback_t *callback: User-defined callback functions

- [IN] hb_ptr userdata: Pointer to user data, which will be passed as an argument when the callback function is invoked

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

【Function Description】

Sets the callback function pointers. After calling this function, MediaCodec enters asynchronous operation mode.

【Example Code】

Refer to hb_mm_mc_initialize

hb_mm_mc_configure

【Function Declaration】

hb_s32 hb_mm_mc_configure(media_codec_context_t *context)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INSUFFICIENT_RES: Insufficient internal memory resources

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

【Function Description】

Configures the encoder or decoder based on the input information. Upon successful invocation, MediaCodec enters the MEDIA_CODEC_STATE_CONFIGURED state.

【Example Code】

Refer to hb_mm_mc_initialize

hb_mm_mc_start

【Function Declaration】

hb_s32 hb_mm_mc_start(media_codec_context_t *context, const

mc_av_codec_startup_params_t *info)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [IN] mc_av_codec_startup_params_t *info: Startup parameters for audio/video encoding or decoding

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INSUFFICIENT_RES: Insufficient internal memory resources

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

【Function Description】

Starts the encoding/decoding process. MediaCodec will create the codec instance, set up sequences or parse the data stream, register Framebuffers, encode header information, etc. Upon successful invocation, MediaCodec enters the MEDIA_CODEC_STATE_STARTED state.

【Example Code】

Refer to hb_mm_mc_initialize

hb_mm_mc_stop

【Function Declaration】

hb_s32 hb_mm_mc_stop(media_codec_context_t *context)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

【Function Description】

Stops the encoding/decoding process, terminates all child threads, and releases associated resources. Upon successful invocation, MediaCodec returns to the MEDIA_CODEC_STATE_INITIALIZED state.

【Example Code】

Refer to hb_mm_mc_initialize

hb_mm_mc_pause

【Function Declaration】

hb_s32 hb_mm_mc_pause(media_codec_context_t *context)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

【Function Description】

Pauses the encoding/decoding process and suspends all child threads. Upon successful invocation, MediaCodec enters the MEDIA_CODEC_STATE_PAUSED state.

【Example Code】

Refer to hb_mm_mc_queue_input_buffer

hb_mm_mc_flush

【Function Declaration】

hb_s32 hb_mm_mc_flush(media_codec_context_t *context)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

【Function Description】

Flushes the input and output buffer queues, forcing the encoder/decoder to flush any unprocessed input or output buffers. After this function is called, MediaCodec enters the MEDIA_CODEC_STATE_FLUSHING state. Upon successful completion, MediaCodec returns to the MEDIA_CODEC_STATE_STARTED state.

【Example Code】

Refer to hb_mm_mc_queue_input_buffer

hb_mm_mc_release

【Function Declaration】

hb_s32 hb_mm_mc_release(media_codec_context_t *context)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

【Function Description】

Releases all internal resources held by MediaCodec. The user must call hb_mm_mc_stop to stop encoding/decoding before invoking this function. Upon successful completion, MediaCodec enters the MEDIA_CODEC_STATE_UNINITIALIZED state.

【Example Code】

Refer to hb_mm_mc_initialize
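The state changes documented for hb_mm_mc_start, hb_mm_mc_stop, hb_mm_mc_pause, hb_mm_mc_flush, and hb_mm_mc_release can be summarized as a small lookup. The following is a minimal, self-contained sketch rather than the library's implementation. The `sketch_*` and `SKETCH_*` names are hypothetical stand-ins for the real `media_codec_state_t` values, which are defined in hb_media_codec.h. The sketch records only the state reached after a successful call, and does not model which source states the library accepts.

```c
#include <assert.h>

/* Hypothetical stand-ins for the media_codec_state_t values named in this
 * section; the real enum is defined in hb_media_codec.h. */
typedef enum {
    SKETCH_STATE_UNINITIALIZED,
    SKETCH_STATE_INITIALIZED,
    SKETCH_STATE_STARTED,
    SKETCH_STATE_PAUSED
} sketch_state_t;

/* One entry per lifecycle call described above. */
typedef enum {
    SKETCH_OP_START,
    SKETCH_OP_STOP,
    SKETCH_OP_PAUSE,
    SKETCH_OP_FLUSH,
    SKETCH_OP_RELEASE
} sketch_op_t;

/* Returns the state documented as the result of a successful call. */
static sketch_state_t sketch_state_after(sketch_op_t op)
{
    switch (op) {
    case SKETCH_OP_START:   return SKETCH_STATE_STARTED;
    case SKETCH_OP_STOP:    return SKETCH_STATE_INITIALIZED;
    case SKETCH_OP_PAUSE:   return SKETCH_STATE_PAUSED;
    case SKETCH_OP_FLUSH:   return SKETCH_STATE_STARTED; /* passes through FLUSHING */
    case SKETCH_OP_RELEASE: return SKETCH_STATE_UNINITIALIZED;
    }
    assert(0 && "unknown operation");
    return SKETCH_STATE_UNINITIALIZED;
}
```

A caller could compare the value reported by hb_mm_mc_get_state against such a table after each call to catch unexpected transitions during bring-up.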

hb_mm_mc_get_state

【Function Declaration】

hb_s32 hb_mm_mc_get_state(media_codec_context_t *context, media_codec_state_t *state)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [OUT] media_codec_state_t *state: Current state of MediaCodec

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

【Function Description】

Retrieves the current state of MediaCodec.

【Example Code】

Refer to hb_mm_mc_initialize

hb_mm_mc_get_status

【Function Declaration】

hb_s32 hb_mm_mc_get_status(media_codec_context_t *context, mc_inter_status_t *status)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [OUT] mc_inter_status_t *status: Current internal status of MediaCodec

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

【Function Description】

Retrieves the current internal status information of MediaCodec.

【Example Code】

Refer to hb_mm_mc_get_fd

hb_mm_mc_queue_input_buffer

【Function Declaration】

hb_s32 hb_mm_mc_queue_input_buffer(media_codec_context_t *context, media_codec_buffer_t *buffer, hb_s32 timeout)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [IN] media_codec_buffer_t *buffer: Input buffer information

- [IN] hb_s32 timeout: Timeout duration

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

- HB_MEDIA_ERR_INVALID_BUFFER: Invalid buffer

- HB_MEDIA_ERR_WAIT_TIMEOUT: Wait timeout

【Function Description】

Queues a filled input buffer into MediaCodec for processing.

【Example Code】

#include <stdint.h>

#include <stdio.h>

#include <string.h>

#include <time.h>

#include "hb_media_codec.h"

#include "hb_media_error.h"

typedef struct MediaCodecTestContext {

media_codec_context_t *context;

char *inputFileName;

char *outputFileName;

int32_t duration; // s

} MediaCodecTestContext;

Uint64 osal_gettime(void)

{

struct timespec tp;

clock_gettime(CLOCK_MONOTONIC, &tp);

return ((Uint64)tp.tv_sec*1000 + tp.tv_nsec/1000000);

}

static void do_sync_encoding(void *arg) {

hb_s32 ret = 0;

FILE *inFile = NULL; // initialized so the ERR path can test it safely

FILE *outFile = NULL;

int noMoreInput = 0;

int lastStream = 0;

Uint64 lastTime = 0;

Uint64 curTime = 0;

int needFlush = 1;

MediaCodecTestContext *ctx = (MediaCodecTestContext *)arg;

media_codec_context_t *context = ctx->context;

char *inputFileName = ctx->inputFileName;

char *outputFileName = ctx->outputFileName;

media_codec_state_t state = MEDIA_CODEC_STATE_NONE;

inFile = fopen(inputFileName, "rb");

if (!inFile) {

goto ERR;

}

outFile = fopen(outputFileName, "wb");

if (!outFile) {

goto ERR;

}

//get current time

lastTime = osal_gettime();

ret = hb_mm_mc_initialize(context);

if (ret) {

goto ERR;

}

ret = hb_mm_mc_configure(context);

if (ret) {

goto ERR;

}

mc_av_codec_startup_params_t startup_params;

memset(&startup_params, 0x00, sizeof(startup_params));

startup_params.video_enc_startup_params.receive_frame_number = 0;

ret = hb_mm_mc_start(context, &startup_params);

if (ret) {

goto ERR;

}

ret = hb_mm_mc_pause(context);

if (ret) {

goto ERR;

}

do {

if (!noMoreInput) {

media_codec_buffer_t inputBuffer;

memset(&inputBuffer, 0x00, sizeof(media_codec_buffer_t));

ret = hb_mm_mc_dequeue_input_buffer(context, &inputBuffer, 100);

if (!ret) {

curTime = osal_gettime();

if ((curTime - lastTime)/1000 < (uint32_t)ctx->duration) {

ret = fread(inputBuffer.vframe_buf.vir_ptr[0], 1,

inputBuffer.vframe_buf.size, inFile);

if (ret <= 0) {

if(fseek(inFile, 0, SEEK_SET)) {

printf("Failed to rewind input file\n");

} else {

ret = fread(inputBuffer.vframe_buf.vir_ptr[0], 1,

inputBuffer.vframe_buf.size, inFile);

if (ret <= 0) {

printf("Failed to read input file\n");

}

}

}

} else {

printf("Time up(%d)\n",ctx->duration);

ret = 0;

}

if (!ret) {

printf("There is no more input data!\n");

inputBuffer.vframe_buf.frame_end = TRUE;

noMoreInput = 1;

}

ret = hb_mm_mc_queue_input_buffer(context, &inputBuffer, 100);

if (ret) {

printf("Queue input buffer fail.\n");

break;

}

} else {

// dequeue failed: only a timeout is tolerated

if (ret != (int32_t)HB_MEDIA_ERR_WAIT_TIMEOUT) {

printf("Dequeue input buffer fail.\n");

break;

}

}

}

if (!lastStream) {

media_codec_buffer_t outputBuffer;

media_codec_output_buffer_info_t info;

memset(&outputBuffer, 0x00, sizeof(media_codec_buffer_t));

memset(&info, 0x00, sizeof(media_codec_output_buffer_info_t));

ret = hb_mm_mc_dequeue_output_buffer(context, &outputBuffer, &info,

3000);

if (!ret && outFile) {

fwrite(outputBuffer.vstream_buf.vir_ptr,

outputBuffer.vstream_buf.size, 1, outFile);

ret = hb_mm_mc_queue_output_buffer(context, &outputBuffer, 100);

if (ret) {

printf("Queue output buffer fail.\n");

break;

}

if (outputBuffer.vstream_buf.stream_end) {

printf("There is no more output data!\n");

lastStream = 1;

break;

}

} else {

if (ret != (int32_t)HB_MEDIA_ERR_WAIT_TIMEOUT) {

printf("Dequeue output buffer fail.\n");

break;

}

}

}

if (needFlush) {

ret = hb_mm_mc_flush(context);

needFlush = 0;

if (ret) {

break;

}

}

}while(TRUE);

hb_mm_mc_stop(context);

hb_mm_mc_release(context);

context = NULL;

ERR:

if (context) {

hb_mm_mc_get_state(context, &state);

if (state != MEDIA_CODEC_STATE_UNINITIALIZED) {

hb_mm_mc_stop(context);

hb_mm_mc_release(context);

}

}

if (inFile)

fclose(inFile);

if (outFile)

fclose(outFile);

}

int main(int argc, char *argv[])

{

hb_s32 ret = 0;

char inputFileName[MAX_FILE_PATH] = "./tmp.yuv";

char outputFileName[MAX_FILE_PATH] = "./output.stream";

mc_video_codec_enc_params_t *params;

media_codec_context_t context;

memset(&context, 0x00, sizeof(media_codec_context_t));

context.codec_id = MEDIA_CODEC_ID_H265;

context.encoder = TRUE;

params = &context.video_enc_params;

params->width = 640;

params->height = 480;

params->pix_fmt = MC_PIXEL_FORMAT_YUV420P;

params->frame_buf_count = 5;

params->external_frame_buf = FALSE;

params->bitstream_buf_count = 5;

params->rc_params.mode = MC_AV_RC_MODE_H265CBR;

ret = hb_mm_mc_get_rate_control_config(&context, &params->rc_params);

if (ret) {

return -1;

}

params->rc_params.h265_cbr_params.bit_rate = 5000;

params->rc_params.h265_cbr_params.frame_rate = 30;

params->rc_params.h265_cbr_params.intra_period = 30;

params->gop_params.decoding_refresh_type = 2;

params->gop_params.gop_preset_idx = 2;

params->rot_degree = MC_CCW_0;

params->mir_direction = MC_DIRECTION_NONE;

params->frame_cropping_flag = FALSE;

MediaCodecTestContext ctx;

memset(&ctx, 0x00, sizeof(ctx));

ctx.context = &context;

ctx.inputFileName = inputFileName;

ctx.outputFileName = outputFileName;

ctx.duration = 5;

do_sync_encoding(&ctx);

return 0;

}

hb_mm_mc_dequeue_input_buffer

【Function Declaration】

hb_s32 hb_mm_mc_dequeue_input_buffer(media_codec_context_t *context, media_codec_buffer_t *buffer, hb_s32 timeout)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [IN] hb_s32 timeout: Timeout duration

- [OUT] media_codec_buffer_t *buffer: Input buffer information

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

- HB_MEDIA_ERR_INVALID_BUFFER: Invalid buffer

- HB_MEDIA_ERR_WAIT_TIMEOUT: Wait timeout

【Function Description】

Obtains an available input buffer from MediaCodec.

【Example Code】

Refer to hb_mm_mc_queue_input_buffer
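The referenced example treats HB_MEDIA_ERR_WAIT_TIMEOUT from a dequeue call as non-fatal and simply tries again on the next loop iteration, while any other error ends the loop. That policy can be factored into a bounded retry helper. The following is a minimal sketch under stated assumptions: the `SKETCH_*` codes are placeholder values (the real HB_MEDIA_ERR_* values come from hb_media_error.h), and `sketch_call_fn`, `sketch_retry_on_timeout`, and `sketch_flaky_call` are hypothetical names that are not part of the MediaCodec API.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Placeholder status codes; the real values are defined in hb_media_error.h. */
#define SKETCH_OK               0
#define SKETCH_ERR_WAIT_TIMEOUT (-1)
#define SKETCH_ERR_UNKNOWN      (-2)

/* Any buffer call wrapped to take one opaque argument and return a status. */
typedef int32_t (*sketch_call_fn)(void *arg);

/* Calls fn until it returns something other than a timeout, or until
 * max_attempts calls have been made. Returns the last status seen. */
static int32_t sketch_retry_on_timeout(sketch_call_fn fn, void *arg,
                                       int max_attempts)
{
    int32_t ret = SKETCH_ERR_WAIT_TIMEOUT;
    assert(fn != NULL && max_attempts > 0);
    for (int i = 0; i < max_attempts; i++) {
        ret = fn(arg);
        if (ret != SKETCH_ERR_WAIT_TIMEOUT) {
            break; /* success, or an error that retrying will not fix */
        }
    }
    return ret;
}

/* Test double: times out until *remaining reaches zero, then succeeds. */
static int32_t sketch_flaky_call(void *arg)
{
    int *remaining = (int *)arg;
    if (*remaining > 0) {
        (*remaining)--;
        return SKETCH_ERR_WAIT_TIMEOUT;
    }
    return SKETCH_OK;
}
```

In real code, the wrapped function would bind the context, buffer, and per-call timeout in a small struct passed through `arg`. Bounding the number of attempts keeps a stalled codec from blocking the caller indefinitely.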

hb_mm_mc_queue_output_buffer

【Function Declaration】

hb_s32 hb_mm_mc_queue_output_buffer(media_codec_context_t *context, media_codec_buffer_t *buffer, hb_s32 timeout)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [IN] media_codec_buffer_t *buffer: Output buffer information

- [IN] hb_s32 timeout: Timeout duration

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

- HB_MEDIA_ERR_INVALID_BUFFER: Invalid buffer

- HB_MEDIA_ERR_WAIT_TIMEOUT: Wait timeout

【Function Description】

Returns a processed output buffer to MediaCodec so that it can be reused.

【Example Code】

Refer to hb_mm_mc_queue_input_buffer
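The dequeue/queue pairing implies that output buffers are borrowed from MediaCodec and must be handed back after use. The following self-contained model illustrates why: it assumes a fixed pool of buffers (five, mirroring `bitstream_buf_count = 5` in the referenced example) and shows the pool running dry when borrowed buffers are not returned. This is an assumed model for illustration only; the `sketch_pool_*` names are hypothetical, and the library's real buffer management is not described in this section.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define SKETCH_POOL_CAPACITY 5 /* mirrors bitstream_buf_count in the example */

/* Tracks which buffers are currently on loan to the application. */
typedef struct {
    bool in_use[SKETCH_POOL_CAPACITY];
} sketch_pool_t;

/* Borrows a free buffer; returns its index, or -1 when all are on loan. */
static int sketch_pool_dequeue(sketch_pool_t *pool)
{
    assert(pool != NULL);
    for (int i = 0; i < SKETCH_POOL_CAPACITY; i++) {
        if (!pool->in_use[i]) {
            pool->in_use[i] = true;
            return i;
        }
    }
    return -1;
}

/* Returns a borrowed buffer; 0 on success, -1 if index is not on loan. */
static int sketch_pool_queue(sketch_pool_t *pool, int index)
{
    assert(pool != NULL);
    if (index < 0 || index >= SKETCH_POOL_CAPACITY || !pool->in_use[index]) {
        return -1;
    }
    pool->in_use[index] = false;
    return 0;
}
```

Under this model, an application that writes each bitstream to a file and then forgets to call the queue function would see dequeue fail after five frames, which is why the example returns every output buffer immediately after `fwrite`.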

hb_mm_mc_dequeue_output_buffer

【Function Declaration】

hb_s32 hb_mm_mc_dequeue_output_buffer(media_codec_context_t *context, media_codec_buffer_t *buffer, media_codec_output_buffer_info_t *info, hb_s32 timeout)

【Parameter Description】

- [IN] media_codec_context_t *context: Context specifying the codec type

- [IN] hb_s32 timeout: Timeout duration

- [OUT] media_codec_buffer_t *buffer: Output buffer information

- [OUT] media_codec_output_buffer_info_t *info: Information about the output bitstream

【Return Values】

- 0: Operation succeeded

- HB_MEDIA_ERR_UNKNOWN: Unknown error

- HB_MEDIA_ERR_OPERATION_NOT_ALLOWED: Operation not allowed

- HB_MEDIA_ERR_INVALID_INSTANCE: Invalid instance

- HB_MEDIA_ERR_INVALID_PARAMS: Invalid parameters

- HB_MEDIA_ERR_INVALID_BUFFER: Invalid buffer

- HB_MEDIA_ERR_WAIT_TIMEOUT: Wait timeout

【Function Description】

Obtains an output buffer containing processed data from MediaCodec.

【Example Code】